Nutanix Agentic AI bids to stoke up enterprise AI factories

Nutanix is positioning its agentic AI solution as a full software stack, purpose-built for real-world enterprise deployments.

The company thinks we have now hit a tipping point where the barrier to success is no longer the model or building individual agents, but the complexity of managing the infrastructure required to securely run thousands of agents at scale.

As an infrastructure specialist, Nutanix would be likely to say that but, in fairness, this is very much the narrative that we’re hearing from the rest of the industry.

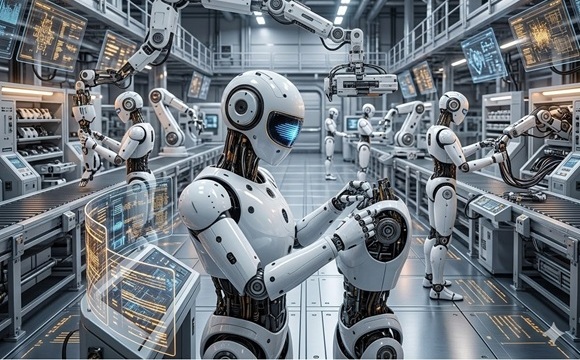

Nutanix says it now aims to give infrastructure and platform teams the tools to “build and operate AI factories” today, with an emphasis on performance, security and compliance with sovereignty requirements.

What is an AI factory?

We can define an AI factory as a collection of platform-level and infrastructure-level technologies and toolsets that work to ingest data and train machine learning models on a continuous basis. Rather than coal or any other form of fuel, this factory runs on data and its machinery is aligned to process intelligence, predictions and autonomous decisions through interconnected compute functions, data pipelines and algorithmic logic. The AI factory ultimately delivers and deploys AI-powered outputs with the scope to scale once any given AI service enjoys widespread user uptake.

We’re now hearing firms talk about how data scientists and agentic AI developers expect easy access to tools and services to run and fine-tune models, build agents and securely connect them to enterprise data… and this is the channel that Nutanix aims to flow in.

“Enterprise AI becomes economically viable when the infrastructure around it is engineered for scale. By integrating orchestration, models and data services into a single stack, Nutanix is moving enterprise AI from isolated agents to operational ‘AI factories’,” said Mark Vigoroso, founder & CEO of B2B tech catalyst, The Enterprise Edge.

Thomas Cornely, executive vice president of product management at Nutanix, says that in contrast to AI infrastructure for model training (which was optimised to run ‘one big job’), we need to realise that production agentic AI infrastructure needs to handle scale and high rates of change for thousands of AI services, agents and concurrent users and developers.

Cloud ops model for AI factories

Nutanix Agentic AI extends the AHV hypervisor, Flow Virtual Networking, Nutanix Kubernetes Platform and Nutanix Enterprise AI to create a cloud operating model for enterprise AI factories, giving infrastructure and platform teams a simpler way to build, operate and govern them.

According to Cornely, the technology on offer here integrates with Nvidia AI Enterprise at the Agent Builder layer and orchestrates the Nvidia-certified ecosystem of AI factories for supported configurations. It provides dynamic, multiuser AI environments to build, run and protect agentic AI applications with a full suite of infrastructure orchestration and security software coupled with AI Platform Services (PaaS) and Models-as-a-Service (MaaS) for data scientists and agentic AI developers.

“Nutanix’s Agentic AI stack removes much of the infrastructure friction that can slow down enterprise AI projects. By bringing the layers together – from Models-as-a-Service at the top, to an AI platform built on a standardised Kubernetes distribution, down to GPU-aware hypervisors and DPU-accelerated networking – organisations get a more coherent AI stack, enabling AI factories that deliver strong performance and security while driving down the cost per token,” said Steve McDowell, chief analyst, Nand Research.

Nutanix says its Agentic AI (CAPS used to denote branded service) reduces complexity, delivers optimised performance and security and is designed to enable lower, predictable token costs by providing the agentic AI services and a Kubernetes platform.

This AI PaaS and Kubernetes native software layer consists of:

- An Advanced AI Gateway and Model-as-a-Service: The latest release of Nutanix Enterprise AI (NAI), version 2.6, now includes an AI Gateway service for unified policy control over cloud-hosted and private LLMs. New support for the Model Context Protocol (MCP) server and fine-tuning extends its existing MaaS capabilities to enable agents to securely connect to enterprise tools and data sources. NAI also now includes support for the Nvidia Nemotron family of open-source AI models, datasets and training tools designed to help developers build agentic AI systems that can reason, securely access tools and complete complex multistep tasks independently.

- An Open Kubernetes Platform With a Rich AI Catalogue: Nutanix simplifies the path to Agentic AI by extending its CNCF-compliant Nutanix Kubernetes Platform with a rich catalogue of pre-built open source AI developer tools, including Notebooks, Vector Databases, MLOps workflow engines and Agentic frameworks. Because it is fully integrated with Nvidia AI Enterprise software, developers can instantly deploy Nvidia NIM microservices, including Nemotron, to accelerate the development of high-performance AI applications in production.
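
To make the “unified policy control over cloud-hosted and private LLMs” idea in the first bullet a little more concrete, here is a minimal sketch of how a gateway might pick a model endpoint. This is an illustration only and not Nutanix’s implementation or API: the `ModelEndpoint` and `Policy` types, the `route` function, the endpoint names and the token limit are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelEndpoint:
    # Hypothetical description of a model the gateway can reach.
    name: str
    hosting: str  # "private" or "cloud"


@dataclass(frozen=True)
class Policy:
    # Hypothetical per-team policy held centrally by the gateway.
    team: str
    allowed_hosting: frozenset
    max_tokens_per_request: int


def route(policy, endpoints, requested_tokens, sensitive):
    """Return the first endpoint the policy permits, or raise PermissionError."""
    if requested_tokens > policy.max_tokens_per_request:
        raise PermissionError("request exceeds the team's token limit")
    for endpoint in endpoints:
        if endpoint.hosting not in policy.allowed_hosting:
            continue
        # Assumed rule: sensitive prompts never leave privately hosted models.
        if sensitive and endpoint.hosting != "private":
            continue
        return endpoint
    raise PermissionError("no endpoint satisfies the policy")


# Illustrative data only.
ENDPOINTS = [
    ModelEndpoint("cloud-llm", "cloud"),
    ModelEndpoint("private-llm", "private"),
]
POLICY = Policy("data-science", frozenset({"cloud", "private"}), 4096)

if __name__ == "__main__":
    print(route(POLICY, ENDPOINTS, 512, sensitive=True).name)
    print(route(POLICY, ENDPOINTS, 512, sensitive=False).name)
```

The point of the sketch is that one rule set sits in front of every model, wherever it is hosted, so agents and developers call a single entry point rather than carrying their own access logic.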

The stack also covers infrastructure optimisation and security. In the early access version of Nvidia topology-aware AHV, the Nutanix AHV hypervisor has been enhanced to automatically optimise allocation of physical resources to virtual machines on GPU-dense servers and help maximise performance.

The Nutanix Flow Virtual Networking service has been enhanced to offload the network dataplane to Nvidia BlueField, delivering high-performance networking while reducing host CPU and memory consumption. These enhanced capabilities bring all the benefits of virtual machines for workload and tenant isolation, day 2 operations and infrastructure resilience to Agentic AI workloads with maximum performance, security and resource utilisation to help achieve a lower cost per token.
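
Cost per token comes up repeatedly in this announcement, so it is worth spelling out the arithmetic that links it to resource utilisation. The sketch below is a back-of-the-envelope model and not a Nutanix formula: the function name, the hourly cost and the throughput figures are assumptions chosen purely for illustration.

```python
def cost_per_million_tokens(hourly_cost, peak_tokens_per_second, utilisation):
    """Estimate serving cost per one million tokens.

    hourly_cost: assumed all-in infrastructure cost per hour (any currency).
    peak_tokens_per_second: assumed throughput when the hardware is fully busy.
    utilisation: fraction of peak throughput actually achieved, in (0, 1].
    """
    if not 0 < utilisation <= 1:
        raise ValueError("utilisation must be in the range (0, 1]")
    tokens_per_hour = peak_tokens_per_second * utilisation * 3600
    return hourly_cost / tokens_per_hour * 1_000_000


if __name__ == "__main__":
    # Hypothetical figures: same hardware, two different utilisation levels.
    for utilisation in (0.25, 0.5, 1.0):
        cost = cost_per_million_tokens(10.0, 1000, utilisation)
        print(f"utilisation {utilisation:.0%}: {cost:.2f} per million tokens")
```

With hardware cost held constant, doubling utilisation halves the cost per token in this simple model, which is why vendors tie claims about GPU efficiency and offloaded networking to token economics.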

Foundational data services for AI

Agentic AI applications require foundational Data Services. As a solution built on the Nvidia AI Data Platform reference design, Nutanix Unified Storage delivers linearly scalable read/write performance for thousands of GPU clients. By providing a high-capacity tier for KV Cache offloading and support for S3 over RDMA and NFS over RDMA, Nutanix provides a scalable, low-latency data fabric that maximises GPU efficiency across all enterprise AI workloads.

The Nutanix Agentic AI service works in line with Nvidia-certified AI factories and users can deploy AI factories on hardware from Cisco, Dell and Supermicro, supported with joint validation by Nutanix and Nvidia.