Boost Security fires turbo into developer security to secure AI-driven programming

Developers need a singular solution for testing, posture management, secure AI-development and compliance that works within their existing workflow – and they need a service that isn’t bolted together from antiquated tools.

It’s rare to find an enterprise software organisation that addresses developers directly and tells it straight, right from the get-go… the above statement is the first line users will read on Boost Security’s home pages.

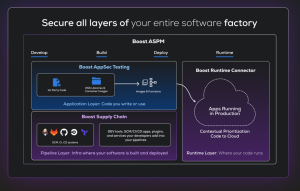

The company has this month detailed Boost Security Developer Endpoint Security, a new platform designed to secure the rapidly expanding attack surface created by AI-powered software development.

Developer machines & coding agents

The platform gives security teams visibility and control over developer machines, coding agents and AI-generated code, helping prevent supply chain attacks, credential leaks and insecure code before it reaches the repository.

Why are AI coding agents such a risk?

The industry broadly agrees that AI coding agents are increasing developer productivity. Among the most popular tools are GitHub Copilot and Cursor (an AI-native IDE recognised for its “deep codebase reasoning” capabilities), as well as autonomous agents like Devin and Claude Code. All worthy in their own right (but with caveats at various levels), these tools are capable of independently navigating repositories to fix complex bugs and execute multi-file refactors.

That’s the good part.

Risk factors

The not-so-good part of this reality is that AI coding agents can introduce new security risks. This is often because developers using them install plugins, MCP servers, IDE extensions and packages recommended by the agents themselves, meaning that “credentials accumulate” across configuration files, environment variables and local machines.

Why is credential accumulation a risk?

Sometimes known as credential sprawl, this accumulation happens because the pick-and-mix modularity of agent-based (sometimes vibe coding-driven) activity leaves centralised security control slack (or broken). The new code blocks, libraries and functions that a coding agent introduces mean that the codebase ends up connected to an (often complex) web of external services, each of which needs its own key to function. Worse perhaps, developers in these scenarios are often driven to create temporary keys to authenticate newly vibed services… and these credentials are soon forgotten about and end up bloating the codebase.

At the same time, AI systems can generate large volumes of code faster than organisations can verify it against secure coding standards or approved dependencies. The challenge is that security teams currently have little visibility into what developers and coding agents are running locally.

Boost Security Developer Endpoint Security has been engineered to address this gap by securing the developer environment directly, embedding protection into the tools, agents and workflows where code is created rather than waiting to scan code after it enters the repository.

“AI coding agents are fundamentally changing how software gets built, but security has largely remained focused on scanning code after the fact,” said Zaid Al Hamami, CEO and founder, Boost Security. “Developer Endpoint Security moves protection upstream. It secures the developer machine, governs the coding agent, and ensures safer code is generated from the start.”

Al Hamami says that Boost Security Developer Endpoint Security provides security teams with centralised visibility and governance across developer environments while enabling developers to identify and remediate issues without slowing development velocity.

Complete AI development workflow

The platform secures the full AI development workflow, from the moment a prompt is sent to a coding agent to the moment code is committed.

Key capabilities include Developer Endpoint Visibility, which continuously discovers coding agents, MCP servers, AI models, IDE extensions, browser extensions, packages and other development artifacts across the “developer fleet” (a term here apparently coined by Boost, but yes, it does make sense), providing security teams with a real-time inventory of the tools developers and agents are using.

Developer Endpoint Safety identifies exposed credentials across dotfiles (hidden configuration files used to customise software settings and environments on Unix-based systems), configuration directories and environment variables while flagging machine configurations that increase the impact of a compromise.
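To make the idea of spotting exposed credentials in dotfiles more concrete, here is a minimal Python sketch. It is not Boost Security's implementation, which the company has not published in this detail. The pattern names, the `find_exposed_credentials` function and the sample file content are all hypothetical, and a real scanner would rely on a far larger rule set.

```python
import re

# Hypothetical rules for illustration only; not taken from any vendor product.
SECRET_PATTERNS = {
    # AWS access key IDs start with "AKIA" followed by 16 uppercase letters or digits.
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    # PEM-style private key header.
    "private_key_header": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
    # A variable whose name suggests a secret, assigned a value of 8+ characters.
    "generic_assignment": re.compile(
        r"(?i)\b(\w*(?:api_?key|secret|token|password)\w*)\s*[=:]\s*['\"]?([^\s'\"]{8,})"
    ),
}


def find_exposed_credentials(text):
    """Return (line_number, rule_name) for every rule that matches a line."""
    findings = []
    for line_number, line in enumerate(text.splitlines(), start=1):
        for rule_name, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append((line_number, rule_name))
    return findings


# Made-up dotfile content; the key below is AWS's documented example value.
sample_dotfile = """\
export EDITOR=vim
export AWS_ACCESS_KEY_ID=AKIAIOSFODNN7EXAMPLE
export PAYMENTS_API_KEY="not-a-real-secret-123"
"""

for line_number, rule_name in find_exposed_credentials(sample_dotfile):
    print(f"line {line_number}: matched {rule_name}")
```

The same function could be pointed at the contents of configuration directories or at `os.environ` entries rendered as `NAME=value` lines, though pattern matching alone will produce both false positives and misses.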

Veto for unvetted connections

A further service, Coding Agent Safety, ensures coding agents only run with approved MCP servers, plugins and skills while preventing unvetted connections and configuration drift from organisational security policies.
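An allowlist check of this kind can be sketched in a few lines of Python. This is an illustration under stated assumptions rather than a description of how Boost enforces policy: it assumes the agent stores its MCP servers in a JSON file under an `mcpServers` key (a layout several coding tools use), and the server names, allowlist and `unapproved_mcp_servers` function are hypothetical.

```python
import json

# Hypothetical organisational allowlist of MCP server names.
APPROVED_MCP_SERVERS = {"internal-docs", "issue-tracker"}


def unapproved_mcp_servers(config_json, allowlist):
    """Return the sorted names of configured MCP servers not on the allowlist."""
    config = json.loads(config_json)
    # Assumption: servers are listed as an object under the "mcpServers" key.
    configured = config.get("mcpServers", {})
    return sorted(name for name in configured if name not in allowlist)


# Made-up agent configuration for demonstration.
agent_config = json.dumps({
    "mcpServers": {
        "internal-docs": {"command": "docs-mcp"},
        "random-web-scraper": {"command": "npx", "args": ["some-scraper"]},
    }
})

violations = unapproved_mcp_servers(agent_config, APPROVED_MCP_SERVERS)
print(violations)
```

Running the same check on a schedule, and comparing results over time, is one simple way to notice configuration drift on a developer machine.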

Secure Agentic Code Generation embeds guardrails into the coding agent workflow so generated code follows organisational secure coding guidelines, uses approved libraries and is analysed and remediated before being committed.

Supply Chain Security evaluates packages, extensions, MCP servers and other components for malware, typosquatting, exploitable vulnerabilities, end-of-life dependencies and other indicators of compromise. Data Leakage Prevention scans outbound prompts before they reach external LLMs to detect and mask credentials, API keys and sensitive data.
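Of the supply chain checks listed above, typosquatting is the easiest to illustrate. The Python sketch below flags a package name that sits within one edit of a well-known name. It is a simplified heuristic and not the vendor's detection logic; the `POPULAR_PACKAGES` list, the `possible_typosquat` function and the distance threshold are assumptions chosen for the example.

```python
def edit_distance(a, b):
    """Levenshtein distance between two strings (insert, delete, substitute)."""
    previous = list(range(len(b) + 1))
    for i, char_a in enumerate(a, start=1):
        current = [i]
        for j, char_b in enumerate(b, start=1):
            cost = 0 if char_a == char_b else 1
            current.append(min(
                previous[j] + 1,         # delete char_a
                current[j - 1] + 1,      # insert char_b
                previous[j - 1] + cost,  # substitute or keep
            ))
        previous = current
    return previous[-1]


# Hypothetical list of well-known package names to protect.
POPULAR_PACKAGES = ["requests", "numpy", "pandas"]


def possible_typosquat(name, known=POPULAR_PACKAGES, max_distance=1):
    """Return the known package that `name` closely resembles, or None."""
    if name in known:
        return None  # the genuine package is not a typosquat of itself
    for popular in known:
        if edit_distance(name, popular) <= max_distance:
            return popular
    return None


print(possible_typosquat("requestss"))
print(possible_typosquat("flask"))
```

Edit distance is only one signal; a production system would likely combine it with package age, download counts and maintainer history before blocking an install.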

Intelligent Security Remediation combines organisational context with vulnerability analysis to deliver AI-assisted fixes aligned with internal architecture patterns and compliance requirements. Finally, for now, Centralized Policy and Enforcement allows security teams to define secure coding standards, allowlists and denylists that apply consistently across both human-written and AI-generated code.