BlueRock forges Trust Context Engine to help developers control agentic systems

BlueRock provides observability, context and control for agentic AI systems operating in production.

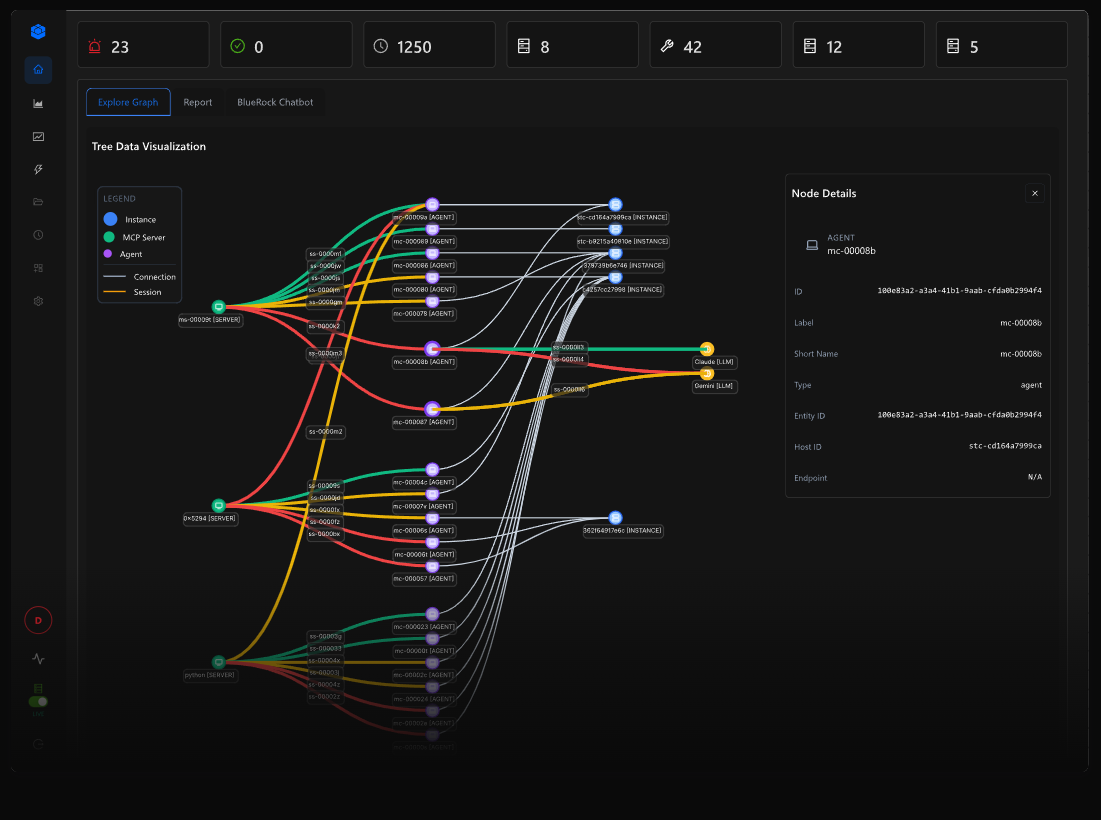

The platform is designed to help developers and data science teams understand how agent decisions propagate across tools, MCP servers and wider systems while enabling the scalable operation of agent-driven workflows.

Now looking to give developers more managed control of agentic systems, BlueRock has announced its Trust Context Engine, a context layer for what the company calls the “agentic action path” that helps teams move faster while making better decisions about how agents interact across tools, MCP servers and connected components.

From code to runtime

The company reminds us that control has shifted from code to runtime, which means that without visibility into execution, teams can’t fully understand or trust agent behaviour. BlueRock says its platform shows what agents actually do, giving teams the trust context to decide what agents should do.

“Agentic systems are changing how software behaves. Instead of following predefined code paths, agents interpret context, select tools and generate execution dynamically at runtime across connected components. Teams must move fast while understanding which components are safe, how they behave and how decisions propagate,” notes the company, in a technical statement.

The Trust Context Engine classifies each action an agent executes – recording what capability was invoked, what component was involved and what downstream effect occurred.

Build, experiment… and run agents

The Trust Context Engine provides the missing context layer between execution and understanding, attaching trust context for each step of the Agentic Action Path. This trust context includes component metadata, trust signals and runtime behaviour – giving teams the information needed to evaluate what agents are doing and make better decisions about what to run in production.

As agents execute, BlueRock attaches identifiers, tool and capability metadata, MCP server attributes, ownership signals, tool classification, access patterns and observed runtime behaviour to each step – creating a unified end-to-end view of execution.
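
To make the idea of per-step trust context more concrete, here is a minimal Python sketch of what such a record might look like. The announcement does not include a schema, so every class, field and value below is a hypothetical illustration based only on the attributes listed above, not BlueRock's actual data model.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class TrustContext:
    """Hypothetical per-step trust context; field names are illustrative only."""
    capability: str            # what capability was invoked
    tool: str                  # tool that provided the capability
    mcp_server: str            # MCP server hosting the tool
    owner: str                 # ownership signal
    tool_classification: str   # assumed labels such as "read-only" or "write"
    access_patterns: List[str] = field(default_factory=list)
    observed_behaviour: List[str] = field(default_factory=list)


@dataclass
class ActionStep:
    """One step in an agent's action path, with its attached context."""
    step_id: str
    context: TrustContext


def summarise(path: List[ActionStep]) -> List[str]:
    """Render one line per step to give an end-to-end view of a run."""
    return [
        f"{step.step_id}: {step.context.capability} via {step.context.tool} "
        f"on {step.context.mcp_server} [{step.context.tool_classification}]"
        for step in path
    ]


# Invented example data for a two-step run.
path = [
    ActionStep("step-1", TrustContext(
        "search_tickets", "ticket_search", "helpdesk-mcp", "example-org",
        "read-only", ["reads ticket index"], ["returned 3 results"])),
    ActionStep("step-2", TrustContext(
        "update_ticket", "ticket_writer", "helpdesk-mcp", "example-org",
        "write", ["writes ticket record"], ["modified 1 ticket"])),
]

for line in summarise(path):
    print(line)
```

Keeping the context on the step itself, rather than in a separate log, is what would let a reviewer read a run from first tool call to final effect in one pass.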

Teams use the Trust Context Engine to build, validate and promote agentic workflows with confidence – connecting to trusted MCP servers, observing execution in practice and deploying only what is verified and appropriate for production. Teams can consume Trust Context signals directly in their workflows, using them to inform automation, approvals, policy decisions and runtime controls.

“AI changed how we write software. Agentic systems change how software behaves,” said Bob Tinker, CEO of BlueRock. “Developers want to move fast and consume capabilities, but they also need to understand what they’re connecting to and how those systems behave. The Trust Context Engine gives teams the context to make better decisions and the visibility to operate with confidence.”

This trust context spans the full execution path – from model decision to tool invocation to downstream system impact – giving teams a real-time view of how agent behaviour unfolds. Teams can also see which components are trusted and adopted across the ecosystem, helping them decide what to build with, what to allow and what to run in production.

How Trust Context Engine works

Developers: Build agents connected to trusted MCP servers and know which servers and tools are safe to build with, then ship agentic workflows that are validated and ready for others to adopt with confidence.

DevOps and platform teams: Plug Trust Context signals into CI/CD automation to evaluate both MCP servers and agent workflows. Trust Context signals help prioritise high-impact activity and promotion decisions in production.

Security teams: Define trusted components and boundaries, then use Trust Context signals to prioritise risk and feed that context into policy and control workflows before unsafe actions execute.

MCP server builders: Understand how your MCP server is rated and used, and identify vulnerabilities and exposure to improve trust posture so more teams can confidently adopt and build with it.

The Trust Context Engine is now powered by two sources of context: curated MCP trust data from the MCP Trust Registry and real-time execution signals captured by BlueRock sensors.

The MCP Trust Registry gathers MCP trust data across public MCP servers – including capability exposure, ownership signals, tool classification and trust attributes. At runtime, BlueRock sensors capture additional signals such as tool usage, access patterns and downstream system impact. Together, these inputs are combined in the Trust Context Engine to create “trust context” that can be used across development and production workflows. As agents interact with MCP servers, BlueRock attaches this combined context directly to each interaction. Teams can see which MCP servers are used, what capabilities they expose and how those capabilities are exercised in real workflows – enriched with both known attributes and observed behaviour.
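
As a rough illustration of how known attributes and observed behaviour could be brought together, the Python sketch below merges a registry-style record with a list of tool calls seen at runtime. The record layout, the function name and the sample values are all assumptions made for this example; the announcement does not describe the real formats.

```python
from typing import Any, Dict, List


def build_trust_context(registry_record: Dict[str, Any],
                        runtime_calls: List[str]) -> Dict[str, Any]:
    """Combine what is known about a server with what was observed at runtime.

    registry_record: hypothetical curated data (server, owner, capabilities).
    runtime_calls:   hypothetical capability names seen during execution.
    """
    exposed = set(registry_record["capabilities"])
    called = set(runtime_calls)
    return {
        "server": registry_record["server"],
        "owner": registry_record["owner"],
        "exercised": sorted(exposed & called),   # exposed and actually used
        "unused": sorted(exposed - called),      # exposed but never used
        "undeclared": sorted(called - exposed),  # used but not in the record
    }


# Invented example data.
registry_record = {
    "server": "helpdesk-mcp",
    "owner": "example-org",
    "capabilities": ["search_tickets", "update_ticket", "delete_ticket"],
}
runtime_calls = ["search_tickets", "search_tickets", "export_tickets"]

context = build_trust_context(registry_record, runtime_calls)
print(context)
```

In this toy version, the "undeclared" list is where curated data and observed behaviour disagree, which is the kind of gap a combined view is meant to surface.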

This closed “context loop” approach connects what is known about components with how they actually behave in practice, helping teams make better decisions about what to build with, what to allow and what to run in production.

We spoke to the CEO for more…

CWDN: How does the Trust Context Engine handle trust signal conflicts when the MCP Trust Registry’s curated metadata contradicts real-time runtime behaviour captured by BlueRock sensors?

Tinker: We treat conflicts between expected and actual behaviour as a signal that is important to flag to customers, which then drives either an alert or a prevention mechanism blocking the runtime execution. Additionally, metadata in the registry is continually updated and policies can be adapted to address any conflicts. It is worth noting that the context itself provides valuable insight into tool execution from a visibility perspective as well.
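
The alert-or-block behaviour Tinker describes can be pictured with a short Python sketch. It assumes a simple model in which a registry lists the effects a tool is expected to have and a policy flag chooses between alerting and blocking; the names and the model itself are hypothetical and are not taken from BlueRock's product.

```python
from enum import Enum
from typing import Set


class Decision(Enum):
    ALLOW = "allow"
    ALERT = "alert"
    BLOCK = "block"


def resolve_conflict(expected_effects: Set[str],
                     observed_effect: str,
                     enforce: bool) -> Decision:
    """Compare expected and observed behaviour for one tool call.

    expected_effects: effects the curated metadata says the tool may have.
    observed_effect:  effect actually seen at runtime.
    enforce:          hypothetical policy flag; True blocks, False only alerts.
    """
    if observed_effect in expected_effects:
        return Decision.ALLOW
    return Decision.BLOCK if enforce else Decision.ALERT


# A tool registered as read-only is observed writing data.
print(resolve_conflict({"read"}, "write", enforce=False))
print(resolve_conflict({"read"}, "write", enforce=True))
print(resolve_conflict({"read"}, "read", enforce=True))
```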

CWDN: What latency overhead do BlueRock sensors introduce at the tool invocation layer, and how does that impact time-sensitive agentic workflows operating across multiple chained MCP servers?

Tinker: BlueRock’s overhead is minuscule for both tool inspection and runtime sensors. Our platform captures execution-level signals without disrupting the workflows teams are trying to run. BlueRock operates at the point where tools, MCP servers, and downstream actions are invoked (rather than operating as a man-in-the-middle proxy that introduces overhead and latency), thereby preserving context across the full action path without heavy inspection overhead.

CWDN: When Trust Context signals feed into CI/CD pipelines, what’s the schema or API contract developers use to programmatically evaluate MCP server trust scores against custom promotion policies?

Tinker: The MCP Trust Registry provides structured trust data – things like ownership, tool classification, MCP security findings, server risk – as well as a generalised trust score. Developers trigger CI scans upon code promotion and the Trust Registry scanning engine accesses the code via repo connectors. The results and Trust Context data are exposed via API and MCP Server for consumption by developers. Customer DevOps teams author their own CI/CD rules based upon their consumption of the different Trust Context signals to make their own promotion logic, which depends heavily on their industry and agentic workflow.
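
Since Tinker's answer leaves the promotion rules to each customer, the following Python sketch shows one way a DevOps team might express such a rule. It works on a locally defined record and makes no real API call; the record fields, the 0 to 100 score scale, the severity labels and the thresholds are all assumptions for illustration, as the article does not specify the schema.

```python
from dataclasses import dataclass
from typing import Any, Dict, List, Tuple


@dataclass
class PromotionPolicy:
    """Hypothetical team-authored promotion rule."""
    min_trust_score: int                     # assumed 0-100 scale
    max_high_findings: int                   # tolerated high-severity findings
    allowed_classifications: Tuple[str, ...]


def evaluate(record: Dict[str, Any],
             policy: PromotionPolicy) -> Tuple[bool, List[str]]:
    """Return (promote?, reasons for refusal) for one MCP server record."""
    reasons: List[str] = []
    if record["trust_score"] < policy.min_trust_score:
        reasons.append(
            f"trust score {record['trust_score']} below {policy.min_trust_score}")
    high = sum(1 for f in record["findings"] if f["severity"] == "high")
    if high > policy.max_high_findings:
        reasons.append(f"{high} high-severity finding(s)")
    for tool in record["tools"]:
        if tool["classification"] not in policy.allowed_classifications:
            reasons.append(
                f"tool {tool['name']} classified as {tool['classification']}")
    return (not reasons, reasons)


# Invented record standing in for data a team has already retrieved.
record = {
    "server": "helpdesk-mcp",
    "trust_score": 72,
    "findings": [{"id": "F-1", "severity": "high"}],
    "tools": [
        {"name": "ticket_search", "classification": "read-only"},
        {"name": "ticket_writer", "classification": "write"},
    ],
}
policy = PromotionPolicy(min_trust_score=80, max_high_findings=0,
                         allowed_classifications=("read-only",))

ok, reasons = evaluate(record, policy)
print("promote" if ok else "hold", reasons)
```

A CI job built on a rule like this would typically fail the pipeline stage when the first returned value is false and print the reasons for the developer.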

Developers can get started immediately by running agents in BlueRock, seeing how execution unfolds and using that visibility to make better decisions about what to use and what to deploy.