GreenOps - Revenium: To optimise AI for cost and sustainability, start with outcomes

This is a guest post for the Computer Weekly Developer Network written by Daithi Walsh, director of Product at Revenium.

Walsh has decades of hands-on experience across software engineering, cloud infrastructure, AI, enterprise SaaS, DevOps, solution architecture and product management, including work in regulated environments such as payments and US EPA compliance.

Walsh writes in full as follows…

GreenOps and FinOps are tightly coupled: reducing environmental impact and controlling cost are frequently achieved through the same measures. Traditionally, teams achieved both by measuring and optimising idle cloud compute, orphaned resources, oversized instances and inefficient regions. Those approaches work because the waste is deterministically measurable and testable, which makes optimisation cycles scientific.

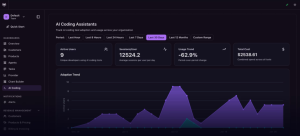

To optimise, you first need to measure.

Modern AI, however, adds a non-deterministic dimension: operations depend on variable inputs and can be subject to hallucination, and outcomes are often subjective and qualitative. This frustrates the measure-optimise cycle that works for cloud infrastructure.

IDC projects that by 2027, large enterprises will face up to a 30 percent rise in underestimated AI infrastructure costs. The IEA projects that data centre electricity demand will more than double by 2030, and cooling infrastructure already places significant demands on water supplies, raising urgent sustainability questions.

The question is: how do you optimise for cost and sustainability at the pace of AI?

Current approaches don’t go far enough

The FinOps Foundation has codified effective practices for managing cloud costs: tag resources, attribute spend, eliminate waste. It is also codifying AI-specific metrics: cost-per-inference, token consumption and time to achieve business value. These are useful at the operation level, but they measure cost and throughput, not outcome quality, and AI changes where waste hides. AI produces value through chains of operations – inference, retrieval, tool calling, routing decisions and external services – not at the atomic level. An error at any stage can trigger downstream processes that were never necessary.

Provider bills show AI costs in fragments: model APIs, cloud runtime and external platform fees. Line items can be distributed across platforms and providers, missing vital context: which chain generated the activity, what business process initiated it, what business value it produced and whether it was profitable.

As with traditional cloud infrastructure, optimisation is only possible by considering the full architecture and its impact on measurable outcomes. The requirement is practical: an audit trail that links the intent with the outcome achieved.
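As a minimal sketch of what such an audit trail could look like, the Python below joins fragmented provider line items to the outcome of the chain that generated them. It assumes a hypothetical `chain_id` correlation ID is propagated through every operation; the `LineItem` and `Outcome` types, the provider labels and all figures are illustrative assumptions, not a real billing schema or real pricing.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class LineItem:
    chain_id: str    # hypothetical correlation ID carried by every operation
    provider: str    # where the charge appears, e.g. a model API or cloud runtime
    cost_usd: float


@dataclass(frozen=True)
class Outcome:
    chain_id: str
    intent: str       # the business process that initiated the chain
    succeeded: bool   # whether the intended outcome was achieved
    value_usd: float  # business value attributed to the outcome


def attribute(items, outcomes):
    """Join fragmented line items to outcomes by chain_id."""
    cost_by_chain = defaultdict(float)
    for item in items:
        cost_by_chain[item.chain_id] += item.cost_usd
    report = {}
    for outcome in outcomes:
        cost = cost_by_chain.get(outcome.chain_id, 0.0)
        report[outcome.chain_id] = {
            "intent": outcome.intent,
            "succeeded": outcome.succeeded,
            "cost_usd": round(cost, 4),
            "profit_usd": round(outcome.value_usd - cost, 4),
        }
    return report


# Illustrative figures only, not real pricing.
items = [
    LineItem("c1", "model-api", 0.12),
    LineItem("c1", "cloud-runtime", 0.03),
    LineItem("c1", "fraud-platform", 0.50),
    LineItem("c2", "model-api", 0.12),
    LineItem("c2", "cloud-runtime", 0.03),
    LineItem("c2", "fraud-platform", 0.50),
]
outcomes = [
    Outcome("c1", "onboarding", True, 40.0),
    Outcome("c2", "onboarding", False, 0.0),
]
report = attribute(items, outcomes)
```

Both chains cost the same on the provider bills; only the joined view shows that one of them produced no value for that spend.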

The cost of getting it wrong

Consider a multimodal onboarding process. A customer submits documents with a clear intent: get assessed and receive an offer. The system extracts data from uploaded documents, checks external platforms for fraud signals, runs a credit assessment and produces a recommendation. If the system misclassifies employment status early in the chain, every downstream step runs against the wrong profile. The credit assessment applies the wrong risk model, the offer is inappropriate and the reviewer’s time is spent on a case that was already compromised.

Intent and outcome are disconnected, but nothing flags it. Either the customer receives an inappropriate offer and rejects it outright, losing trust in the platform, or the case progresses to staff for final sign-off, wasting their time on a compromised case.

The loss is not just financial. Every wasted operation carries a carbon cost. According to the IEA, energy use across AI tasks can vary by orders of magnitude. A failed chain consumes compute, energy and reviewer time while delivering a negative outcome: the organisation would have been better off if the customer had never interacted with the platform. An idle VM waits to be discovered. A misclassified chain is harder to spot. The organisation is absorbing wasted spend, unnecessary carbon and reputational damage, and without outcome-level attribution, no one knows.

Beyond single transactions, AI systems do not stay still. Teams swap models, fine-tune configurations, change providers and adjust prompts over time. Each change can shift outcomes in ways that are invisible without attribution. A workflow that performed well last quarter may be silently degrading today and traditional health metrics will not surface it. Without versioned outcomes, maintenance becomes guesswork and agents become authorised tools with ungoverned resource use.

Start with outcome-level thinking

The starting point is defining the outcome: what initiates a chain of operations and what does success look like? From there, teams can group the operations that serve that outcome, whether built from prompt chains, retrieval pipelines, or multi-agent orchestrations. Wrapped in agentic harnesses with explicit success criteria, these become auditable, versioned, maintainable and reusable. A signup chain can serve renewals with minimal adjustment. This is the missing middle between atomic metrics and business outcomes.

Granular monitoring tells you what each operation cost. Outcome-level observability lets you ask the hard questions: was the operation necessary? Did the customer accept the offer, or did staff find the agent’s output useful? For internal teams, that feedback closes the loop between intent and outcome.

Observability by design & plan for change

Teams need operation-level visibility as a foundation. The next tier is defining explicit value criteria at the outcome level: what quality baseline must be met, when should the system escalate and at what cost does the outcome stop being worthwhile? Controls like retry caps, approval gates and budget thresholds enforce guardrails once expected ranges are understood.
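The three controls named above can be sketched in a few lines of Python. This is an illustration under stated assumptions rather than a prescribed design: `Guardrails`, `run_step` and `make_flaky` are hypothetical names, costs are tracked in integer cents to avoid floating-point drift, and a step signals failure by returning `None`.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Guardrails:
    max_retries: int           # retry cap per operation
    budget_cents: int          # spend at which the outcome stops being worthwhile
    approval_above_cents: int  # spend that triggers a human approval gate


def run_step(step, cost_cents, spent_cents, rails, approve):
    """Run one operation under guardrails; return (status, result, spent_cents)."""
    for _ in range(rails.max_retries + 1):  # first attempt plus capped retries
        projected = spent_cents + cost_cents
        if projected > rails.budget_cents:
            return "budget_exceeded", None, spent_cents
        if projected > rails.approval_above_cents and not approve(projected):
            return "approval_denied", None, spent_cents
        spent_cents = projected
        result = step()
        if result is not None:
            return "ok", result, spent_cents
    return "retries_exhausted", None, spent_cents


def make_flaky(failures):
    """Hypothetical step that fails a fixed number of times, then succeeds."""
    state = {"left": failures}

    def step():
        if state["left"] > 0:
            state["left"] -= 1
            return None
        return "offer-drafted"

    return step


rails = Guardrails(max_retries=2, budget_cents=100, approval_above_cents=50)
always = lambda cents: True
never = lambda cents: False

recovered = run_step(make_flaky(2), 10, 0, rails, always)
capped = run_step(make_flaky(5), 10, 0, rails, always)
over_budget = run_step(make_flaky(0), 10, 95, rails, always)
denied = run_step(make_flaky(0), 10, 45, rails, never)
```

Each non-`ok` status is a point where the chain can escalate to a person instead of continuing to spend on an outcome that is no longer worthwhile.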

With that foundation in place, optimisation becomes practical. The goal is not necessarily the cheapest model or the lowest spend – it is the right level of investment for the outcome. Some steps may not need an LLM at all: traditional code or a rules engine can produce the same result at a fraction of the environmental impact, while also reducing compliance exposure. Where quality matters, investing in a more capable model can reduce downstream failures. Teams can also focus on carbon-specific initiatives that account for the full environmental picture. Each decision compounds: fewer AI operations, configurations optimised for carbon, lower costs and reduced regulatory exposure.

This is sustainable software design applied to AI: choosing the right tool for each step. An outcome-based view lets teams make that call and test it, comparing configurations and providers against measured outcomes.
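One simple way to make that comparison concrete is to divide the cost of a run by the rate at which runs succeed. The sketch below does this for two hypothetical configurations; the names and figures are invented for illustration, and the same arithmetic applies if cost is swapped for an energy or carbon estimate per run.

```python
def cost_per_successful_outcome(cost_per_run, success_rate):
    """Expected spend needed to obtain one successful outcome."""
    if not 0 < success_rate <= 1:
        raise ValueError("success_rate must be in (0, 1]")
    return cost_per_run / success_rate


# Hypothetical configurations and figures, for illustration only.
configs = {
    "small-model": {"cost_per_run": 0.40, "success_rate": 0.60},
    "capable-model": {"cost_per_run": 0.60, "success_rate": 0.95},
}

per_success = {
    name: cost_per_successful_outcome(c["cost_per_run"], c["success_rate"])
    for name, c in configs.items()
}
best = min(per_success, key=per_success.get)
```

With these assumed numbers the configuration that is more expensive per run is cheaper per successful outcome. The calculation ignores the downstream cost of failed chains, such as reviewer time, which would widen the gap further.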

Together, these tiers create an observability foundation for scientific reasoning about what works and what does not. That foundation makes controlled maintenance and evolution possible: when outcomes degrade, teams can trace the cause to a specific change rather than guessing. When the system needs to evolve, teams can do so scientifically.

Outcome-linked attribution

Provider-side changes are inevitable: models get deprecated, behaviour shifts subtly between versions and outages can degrade inference quality without warning. Value can shift even when you change nothing. Outcome-linked attribution makes these shifts visible and diagnosable, turning what would otherwise be silent degradation into a traceable event that teams can triage and resolve.
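A minimal sketch of how such a shift might be surfaced: tag every recorded outcome with the configuration version that produced it, then compare success rates between versions. The version labels, the sample data and the `tolerance` threshold below are assumptions for illustration; a production check would also need enough samples to rule out noise.

```python
from collections import defaultdict


def success_rate_by_version(records):
    """records: iterable of (config_version, succeeded) pairs."""
    totals = defaultdict(lambda: [0, 0])  # version -> [successes, runs]
    for version, succeeded in records:
        totals[version][0] += int(succeeded)
        totals[version][1] += 1
    return {version: s / n for version, (s, n) in totals.items()}


def degraded(rates, baseline, candidate, tolerance=0.05):
    """True if the candidate's success rate fell by more than the tolerance."""
    return rates[baseline] - rates[candidate] > tolerance


# Hypothetical outcome log: 9 of 10 successes before a provider-side
# model update, 6 of 10 after it.
records = (
    [("model-a@v1", True)] * 9 + [("model-a@v1", False)] * 1
    + [("model-a@v2", True)] * 6 + [("model-a@v2", False)] * 4
)
rates = success_rate_by_version(records)
alert = degraded(rates, baseline="model-a@v1", candidate="model-a@v2")
```

Because the version travels with each outcome, a drop in success rate points at a specific change instead of leaving the team to guess.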

Chain-level understanding also supports governance. The EU AI Act classifies AI systems by risk level, with high-risk systems requiring human oversight and auditability. When operations are individually attributable, teams can map which steps carry regulatory risk and apply oversight where it is needed – and every step where AI is eliminated is one fewer touchpoint requiring classification.

It starts with outcomes. Connect every chain of operations to the outcome it delivers – auditable, version-controlled and shared across engineering, finance and sustainability teams. If the industry wants to scale AI responsibly, every production workflow needs a measured outcome.