Confluent Intelligence engineers secure agent connectivity for real-time data

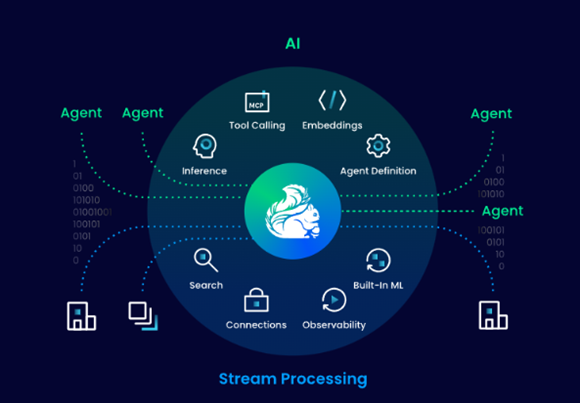

Data streaming specialist Confluent is working to surface artificial intelligence functions across its entire platform of services.

The company’s new Confluent Intelligence capabilities connect AI agents and help data science and software engineering teams uncover more accurate, intelligent data analysis.

Confluent’s Streaming Agents use the Agent2Agent (A2A) protocol to trigger and coordinate external AI agents using real-time data streams, making it easier to connect AI systems across an enterprise.

Data exchange negotiations

As every good developer knows, the Agent2Agent (A2A) protocol enables autonomous AI agents to communicate and “negotiate data exchange” directly using standardised technology frameworks, so that a variety of different AI systems can collaborate without human intervention.

Aiming to keep things safe and secure, Confluent confirms that it has also employed multivariate anomaly detection in its new offering. This technology examines multiple metrics to automatically spot unusual patterns in data streams, which is intended to help teams prevent issues before they cause outages or downstream impacts. In practice, multivariate anomaly detection models the relationships between application or data service features to detect observations that deviate from what is defined as “normal joint behaviour” inside complex, high-dimensional datasets. It does this by detecting subtle correlations or patterns that may appear normal when each metric is taken individually, but that indicate a failure or breach when the metrics are viewed together.

Traditional anomaly detection often analyses metrics in isolation and is frequently restricted to batch-based analysis on historical data. Relying on simple statistical baselines, these systems are highly sensitive to noise, spikes, and bad data. Without context, they can generate false positives, and they typically surface issues after they’ve already impacted the system.
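To make the difference between per-metric and joint analysis concrete, here is a minimal sketch in plain Python. It is not Confluent's implementation: the metrics (requests per second and CPU load), the synthetic history, the threshold of 3 and the use of Mahalanobis distance as the joint score are all assumptions chosen for illustration.

```python
import math
import random


def fit_baseline(points):
    """Estimate mean, per-metric std and inverse 2x2 covariance from (x, y) history."""
    n = len(points)
    mean_x = sum(p[0] for p in points) / n
    mean_y = sum(p[1] for p in points) / n
    var_x = sum((p[0] - mean_x) ** 2 for p in points) / (n - 1)
    var_y = sum((p[1] - mean_y) ** 2 for p in points) / (n - 1)
    cov_xy = sum((p[0] - mean_x) * (p[1] - mean_y) for p in points) / (n - 1)
    det = var_x * var_y - cov_xy ** 2
    inverse_cov = ((var_y / det, -cov_xy / det), (-cov_xy / det, var_x / det))
    return (mean_x, mean_y), (math.sqrt(var_x), math.sqrt(var_y)), inverse_cov


def univariate_z_scores(point, mean, std):
    """Score each metric in isolation, as a traditional detector would."""
    return tuple(abs(v - m) / s for v, m, s in zip(point, mean, std))


def mahalanobis_distance(point, mean, inverse_cov):
    """Score the point against the joint behaviour of both metrics."""
    dx, dy = point[0] - mean[0], point[1] - mean[1]
    q = (dx * (inverse_cov[0][0] * dx + inverse_cov[0][1] * dy)
         + dy * (inverse_cov[1][0] * dx + inverse_cov[1][1] * dy))
    return math.sqrt(q)


# Synthetic history (an assumption): CPU load rises in step with request rate.
rng = random.Random(0)
history = []
for _ in range(500):
    requests = rng.gauss(1000.0, 100.0)
    cpu = 0.05 * requests + rng.gauss(0.0, 1.0)
    history.append((requests, cpu))

mean, std, inverse_cov = fit_baseline(history)

# Traffic is somewhat high while CPU is somewhat low: each value is plausible
# on its own, but the combination breaks the usual relationship.
suspect = (1150.0, 43.0)

THRESHOLD = 3.0  # illustrative cut-off, not a recommended setting
z_scores = univariate_z_scores(suspect, mean, std)
distance = mahalanobis_distance(suspect, mean, inverse_cov)
flagged_univariate = any(z > THRESHOLD for z in z_scores)
flagged_multivariate = distance > THRESHOLD

print("per-metric z-scores:", z_scores, "flagged:", flagged_univariate)
print("joint distance:", distance, "flagged:", flagged_multivariate)
```

In this sketch the suspect reading passes both per-metric checks but is flagged by the joint score, which is the kind of case the multivariate approach is meant to catch. A production system would also need to score events as they arrive and cope with noisy or missing data, which this example does not attempt.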

Context-aware AI systems

Confluent’s branded Multivariate Anomaly Detection feature is a new function of its built-in Machine Learning (ML) Functions.

Together, the Agent2Agent protocol and multivariate anomaly detection form what Confluent describes as intelligent, context-aware AI systems that adapt as data, agents and business conditions change.

“If you want to be competitive, your AI can’t be looking in the rearview mirror,” said Sean Falconer, head of AI at Confluent. “You need a system of AI agents that work together and constantly learn and share insights in real time. Confluent Intelligence connects teams’ AI investments and systems no matter where they’re built – so AI can automatically react to live data, take action, coordinate systems, and escalate to team members as needed.”

Falconer and team remind us that organisations are increasingly turning to AI agents to automate decisions and handle more complex work. But as agents spread across tools and systems, most operate in isolation. If agents can’t communicate with each other or share context across a business, insights get trapped in silos and decisions are fragmented.

Confluent says its Streaming Agents technology addresses this whole challenge by connecting AI agents to real-time data with Anthropic’s Model Context Protocol (MCP) and to other agents with the A2A protocol.

Together, they can continuously analyse information from agent frameworks such as LangChain and data platforms like BigQuery, Snowflake and Databricks to generate insights, then trigger workflows in enterprise AI platforms like ServiceNow and Salesforce to take immediate action.

With A2A support in Streaming Agents, teams can build reusable AI agents and feed existing agents and systems with fresh context from Confluent, so that they can respond to events asynchronously and take further actions.

Inter-agent communication

This technology allows users to unlock inter-agent communication and auditability: every agent action is captured in an immutable log that can be audited and replayed, while Apache Kafka is used to orchestrate communication between agents and to reuse agent outputs across other agents and systems.
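The idea of an append-only record of agent actions that can be replayed later can be sketched in a few lines of Python. This is an in-memory stand-in written for illustration only: it does not use Kafka or any Confluent API, and the `AgentEvent` and `AgentActionLog` names, the agent names and the payload fields are all hypothetical.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentEvent:
    """One recorded agent action; frozen so a stored event cannot be altered."""
    offset: int
    agent: str
    action: str
    payload: str  # JSON text, so later changes to the caller's dict do not leak in


class AgentActionLog:
    """Append-only log: events can be added and read back, never edited or removed."""

    def __init__(self):
        self._events = []

    def append(self, agent, action, payload):
        event = AgentEvent(
            offset=len(self._events),
            agent=agent,
            action=action,
            payload=json.dumps(payload, sort_keys=True),
        )
        self._events.append(event)
        return event.offset

    def replay(self, from_offset=0):
        """Yield events in their original order, starting at from_offset."""
        for event in self._events[from_offset:]:
            yield event


# Hypothetical flow: a detector agent flags an issue, a triage agent reacts to it.
log = AgentActionLog()
first = log.append("anomaly-detector", "flag", {"metric": "cpu", "score": 14.2})
second = log.append("triage-agent", "open-ticket", {"caused_by_offset": first})

# Any other agent, or an auditor, can re-read the same history from the start.
audit_trail = [(e.offset, e.agent, e.action) for e in log.replay()]
print(audit_trail)
```

Because each event carries an offset and is never modified after it is written, a new consumer can rebuild the same sequence of decisions at any time, which is the property that makes auditing and replay possible.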

Streaming Agents acts as the orchestrator… and Confluent tells us that its platform works to ensure governance, security and end-to-end observability for all agent interactions.