Atlassian builds enriched AI‑native engineering with new functions in DX

Atlassian acquired DX around eight months ago.

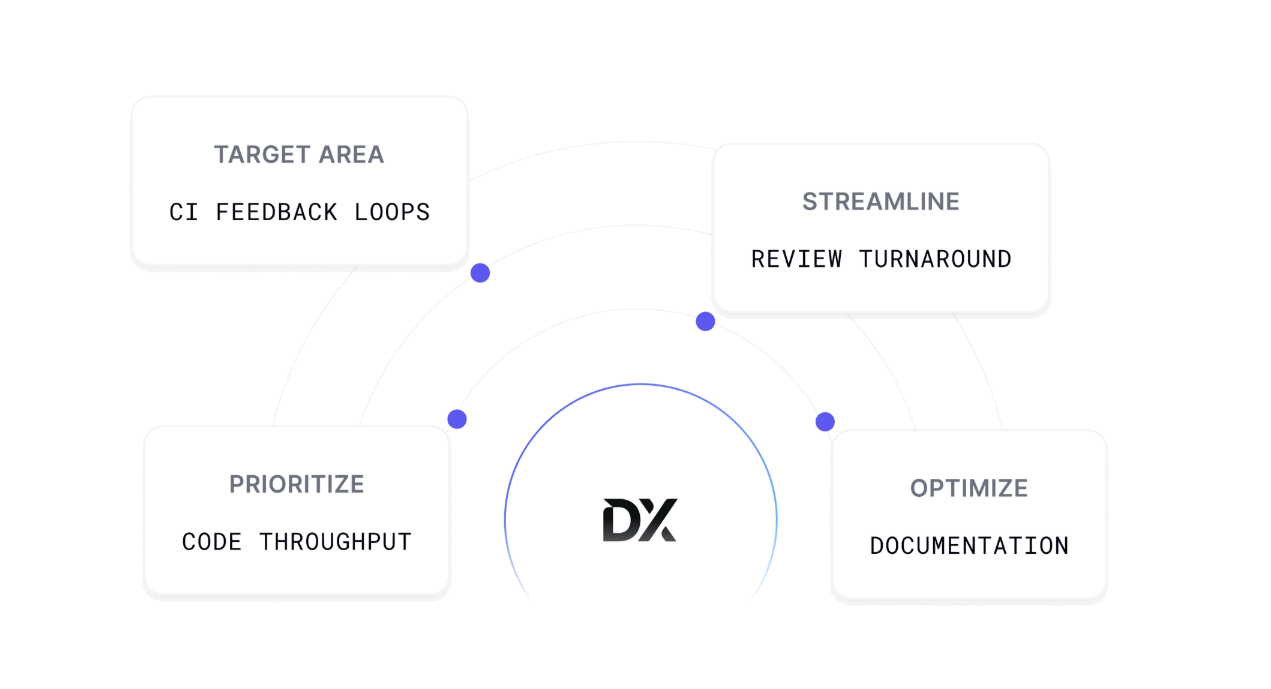

DX is used by enterprises to analyse the productivity levels of their software engineering teams and identify the bottlenecks slowing them down.

The technology also helps measure, benchmark and improve developer productivity through qualitative and quantitative data that is said to “unlock developer flow”, i.e. keep programmers in a positive state of momentum.

When customers ask: How do I know if my engineering teams are productive? Where should I be putting my AI dollars? And how do we measure the ROI of AI investments? DX has the answers, so says Atlassian.

DX is based in Salt Lake City. Atlassian founder and CEO Mike Cannon-Brookes said at the time of the acquisition that DX has done a good job of understanding the qualitative and quantitative aspects of developer productivity and turning that insight into actions that can improve enterprises.

The company reminds us that AI is changing how software engineering teams work faster than most organisations can adapt. Coding assistants are now part of the daily workflow, agents are starting to own tasks end-to-end, and the way we deliver software is being redefined in real time.

New questions on AI

With that shift, engineering leaders are facing a new set of questions.

Are these tools actually improving outcomes? Where are they falling short? Which teams are seeing value… and which aren’t? How do you report the ROI of an investment that touches every developer, every day, in ways that are hard to see?

To help leaders answer these questions, DX recently released four major new features and products aimed at the shift to AI-native software delivery. They include an AI chat interface for interacting with an organisation’s data, visibility into how much code is generated by AI, proactive alerts based on SLAs, and a new way to measure agent effectiveness and decide where to invest to close gaps.

New feature breakdown

AI Code Insights, which offers deeper insight into AI-generated code, is now available. As AI coding tool adoption matures, Atlassian says that the key question for engineering leaders is whether AI is being used effectively and whether the investment is justified.

“Leaders face critical questions that are difficult to answer with existing tooling. How are developers actually using these tools, and what are they spending on tokens? Are teams providing AI with sufficient context and clarity to generate useful output? Are codebases structured in ways that AI can effectively navigate? AI Code Insights gives organisations real-time visibility into how AI tools are being used and where they’re falling short,” noted the company, in a press statement.

The technology comprises a number of main reports and highlights AI-generated code attribution, so that developers can see exactly which commits and pull requests (PRs) contain AI-generated code.

The AI Code Overview report

This allows developers to explore critical SDLC metrics “bucketed” (i.e. segmented) by the percentage of AI-generated code in each PR, so teams can start to understand how AI-generated code moves through their development process differently than human-written code.
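To make the bucketing idea concrete, here is a minimal Python sketch. It is not DX's implementation: the `PullRequest` fields, the bucket edges and the choice of median cycle time as the metric are all assumptions made for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import median


@dataclass
class PullRequest:
    # Hypothetical fields for illustration; not DX's data model.
    ai_fraction: float       # share of changed lines attributed to AI, 0.0 to 1.0
    cycle_time_hours: float  # time from PR opened to PR merged


# Illustrative bucket upper bounds (inclusive); the real report's buckets are not stated.
BUCKETS = [
    (0.00, "0%"),
    (0.25, "1-25%"),
    (0.50, "26-50%"),
    (0.75, "51-75%"),
    (1.00, "76-100%"),
]


def bucket_label(ai_fraction: float) -> str:
    """Return the label of the first bucket whose upper bound covers the value."""
    if not 0.0 <= ai_fraction <= 1.0:
        raise ValueError("ai_fraction must be between 0.0 and 1.0")
    for upper, label in BUCKETS:
        if ai_fraction <= upper:
            return label
    raise AssertionError("unreachable: the last bucket covers 1.0")


def median_cycle_time_by_bucket(prs: list[PullRequest]) -> dict[str, float]:
    """Group PRs by AI share and report the median cycle time per group."""
    grouped: dict[str, list[float]] = defaultdict(list)
    for pr in prs:
        grouped[bucket_label(pr.ai_fraction)].append(pr.cycle_time_hours)
    return {label: median(values) for label, values in grouped.items()}
```

Comparing the per-bucket medians side by side is one simple way to see whether PRs with a high share of AI-generated code move faster or slower than mostly human-written ones.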

Agent Experience insights

This technology surfaces insights directly from AI agents about where they’re hitting bottlenecks during a session: missing context, ambiguous instructions, structural barriers in the codebase.

“This is a fundamentally new signal: rather than inferring effectiveness from output, a developer is hearing from the agent itself. The Agent Experience report provides an overall agent effectiveness score across an organisation, filterable by team, with a detailed per-session view,” states Atlassian.
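As a rough illustration of an organisation-wide score that can be filtered by team, the sketch below averages per-session scores. The `AgentSession` fields, the 0 to 100 scale and the use of a plain mean are assumptions; Atlassian has not described how the actual score is calculated.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Optional


@dataclass
class AgentSession:
    # Hypothetical record shape; not the Agent Experience schema.
    session_id: str
    team: str
    score: float  # assumed 0-100 effectiveness score for one session


def effectiveness_score(
    sessions: list[AgentSession], team: Optional[str] = None
) -> Optional[float]:
    """Mean session score for the whole organisation, or for one team if given."""
    selected = [s.score for s in sessions if team is None or s.team == team]
    # Return None rather than raising when no sessions match the filter.
    return mean(selected) if selected else None
```

Calling `effectiveness_score(sessions)` gives the overall figure, while `effectiveness_score(sessions, team="platform")` narrows it to one team; the per-session view is simply the underlying list of records.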

AI Dollar Impact

Teams can then tie it all together with the AI Dollar Impact report, which translates AI tool investment into an estimated net dollar figure. Rather than debating whether AI is “worth it” in the abstract, leaders can see a concrete financial picture of what their investment is producing, making board-level reporting and budget conversations significantly easier.
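One plausible way to arrive at a net dollar figure is to value the time saved and subtract what was spent. The sketch below assumes exactly that; the formula, the field names and the inputs are illustrative and are not taken from the AI Dollar Impact report.

```python
from dataclasses import dataclass


@dataclass
class AiInvestment:
    # All fields are hypothetical inputs for illustration.
    licence_cost: float        # spend on AI tool licences over the period
    token_spend: float         # spend on tokens over the period
    hours_saved: float         # estimated developer hours saved over the period
    loaded_hourly_cost: float  # fully loaded cost of one developer hour


def estimated_net_impact(inv: AiInvestment) -> float:
    """Assumed formula: value of hours saved minus total AI spend."""
    value_of_time_saved = inv.hours_saved * inv.loaded_hourly_cost
    total_spend = inv.licence_cost + inv.token_spend
    return value_of_time_saved - total_spend
```

With this assumed formula, a negative result would indicate that the estimated time savings have not yet covered the spend.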

AI Code Insights is powered by a lightweight, self-hosted CLI daemon that runs on developer machines across macOS, Windows, and Linux. It supports all major AI coding tools.

“Over the past decade, the industry has learned that developer effectiveness is not primarily about the individual. It’s about the environment: short feedback loops, clear context, well‑structured systems, and minimal friction. Organisations that invest in these conditions consistently outperform those that don’t. Agents are no different,” detailed Atlassian, in a product update blog.

An agent working against a well‑documented, modular codebase with clear instructions will dramatically outperform the same agent working against a monolith with sparse documentation and ambiguous prompts. The difference is less about the model and more about the conditions it operates in.

How to measure developers & agents

DX was founded on a simple principle: the best way to measure developer effectiveness is to go directly to developers. The company says that it has “applied that same idea to agents”. Agent Experience captures what agents encounter in their work, so organisations can systematically improve the conditions that determine whether agents succeed or fail.

Unlike human developers, who require surveys and sampling to surface friction, agents already have full visibility into their own context. They know when documentation is missing, when instructions are ambiguous, and when a codebase is difficult to navigate.

Agent Experience captures this signal across three components, assessed per session – each scored and accompanied by a qualitative comment from the agent explaining what it encountered:

Atlassian and the DX division say that platform teams can use the technology to identify where to invest in AI readiness: improving documentation, adding context files, and restructuring code.

Engineering leaders use it as a lens on organisational readiness for agentic delivery. Individual developers use it to improve how they prompt, structure repos, and guide agents session by session.