Drone kill communications net illustrated

The illustrations add credibility to a legal challenge begun last month over a 2012 contract BT won to build the UK branch of the system – a fibre-optic line between RAF Croughton in Northamptonshire and Camp Lemonnier, a US military base in Djibouti, in the Horn of Africa.

British officials had been slow to finger the BT contract under human rights rules because they said there was no evidence to suggest the UK connection was associated with US drone strikes, let alone any that had gone wrong.

There is, however, clear evidence that the UK connection is part of a global intelligence and weapons targeting network that operates US drone missions like a hand operates a puppet.

The network was meant to make drone weapons targeting more accurate, and catch fewer innocent people in the crossfire.

But this “network-centric” targeting also became the means of a chilling new type of warfare called targeted killing: computer-driven, intelligence-led, extra-judicial assassinations of suspected terrorists like those who kidnapped schoolgirls in Nigeria and massacred shoppers in Kenya.

The UK connection was part of this because under the targeted killing programme, the network is the weapon.

Designed to be utterly discriminate but in practice not completely accurate, it had, according to the Bureau of Investigative Journalism, accidentally killed hundreds of civilians in 13 years of drone strikes on insurgents in fractured states in the Middle East, Asia and North Africa.

The strikes have all but ceased, and the mistakes reportedly led the US to rein in the programme. But the role of the UK connection remains a burning question.

It will not only determine the outcome of a judicial review being sought in the UK by a Yemeni education official whose civilian brother and cousin, Ali and Salim al-Qawli, were killed by a drone strike on their car in the Yemeni village of Sinhan on 23 January 2013.

It will force the UK to face its part in the killing programme. And it will illuminate a frightening growth in the combined power of military and intelligence services: to use the power of domineering surveillance to feed systems of automated targeting and killing.

Network killing

The mechanism of net-centric warfare makes the idea that the UK connection has not facilitated US drone strikes absurd.

The network made targeted killing possible. The network was also the basis of the mechanism that drove the actual strike operations. It carried the intelligence that selected the targets. It ran the software that directed the operations. It incorporated the drones that carried out the strikes.

The drones do not exist as separate entities called in to finish the job. The drones are nodes on the network. They are a part of the network. The network is the weapon.

The US has been building its network up to drive the systems and weapons – and particularly the drones – that support its strategy of network-centric warfare. The UK connection is part of this strategy.

Drones rely on the network like trains rely on tracks, like puppets rely on strings. The network gives the drones their directions, distributes their surveillance, targets their weapons.

Network map

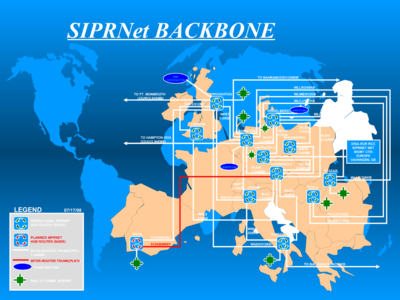

The blue map at the head of this article shows the fibre-optic core of this global network, the Defense Information Systems Network (DISN), as it stood in 2004.

Showing the European branch of the network, the blue map depicts RAF Croughton, in Northamptonshire, as a major junction of the DISN – then essential for carrying classified military communications for the Secure Internet Protocol Router Network (SIPRNET).

It shows how Croughton is connected to Landstuhl/Ramstein in Germany, the regional hub for the Stuttgart headquarters of US Africa Command (US Africom). It shows Croughton also links to Capodichino, the communications hub in Naples, Italy, where the US Navy has its European and African command centres.

Capodichino, like Landstuhl/Ramstein, connects on to Bahrain, the base for US Central Command (Centcom) in the Middle East.

The blue map pre-dates the UK connection to Djibouti, which BT was contracted to provide in October 2012.

The contract specified a high-bandwidth line between the UK and Capodichino (effectively an upgrade), and then an extension to Djibouti, where Camp Lemonnier was having bandwidth problems.

When the blue map was published in 2004, the US military was working intensely to turn the DISN into the global surveillance and weapons targeting system it is today.

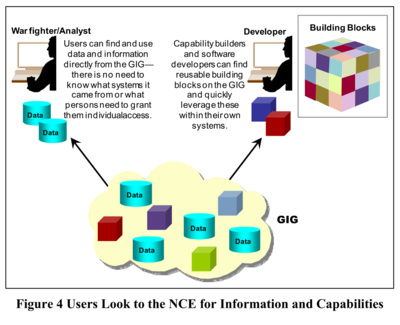

Their efforts turned the DISN into the backbone of a more extensive Department of Defense network called the Global Information Grid (GIG).

Drone net

Scientists and engineers from places such as the Massachusetts Institute of Technology Lincoln Laboratory (MIT-LL) and the National Security Agency (NSA) modelled the GIG on the internet. Like the internet, it was a network of networks.

They joined the DISN with satellite and radio to form a single, seamless network. The US Department of Defense then strove to plug every device into it – every vehicle, every system, every drone – to form one all-encompassing net.

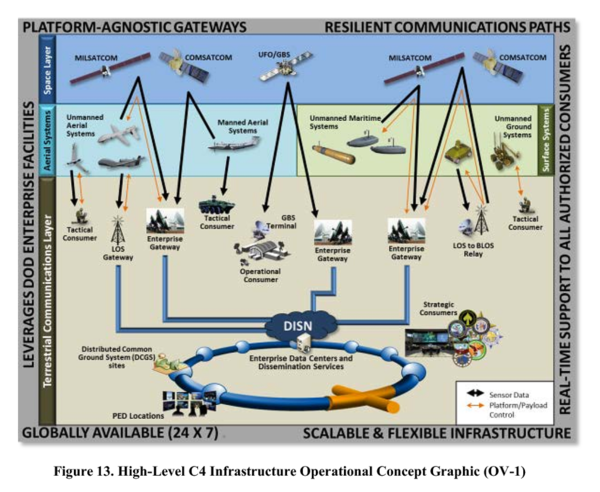

Drones needed the DISN to carry their control signals, as illustrated in the concept diagram above, from the US Department of Defense Unmanned Systems Roadmap 2013-2038.

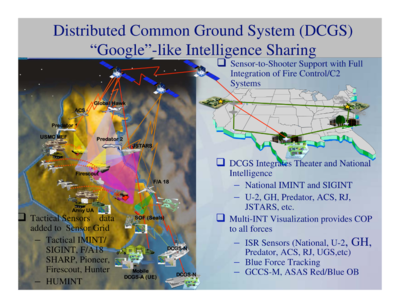

The diagram illustrates how defence and intelligence agencies rely on the DISN as well, to gather video, infra-red and other data from drone sensors. The DISN carries drone data to systems such as the Distributed Common Ground System (DCGS), the common store of imagery intelligence for US military and intelligence agencies.

Satellite connection

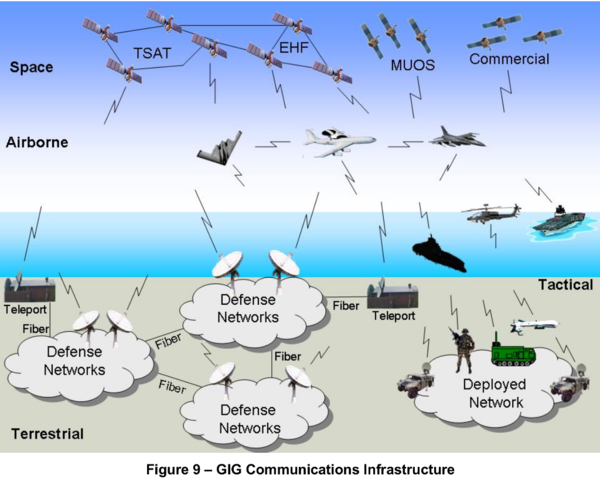

The DISN/GIG became more essential to drones as demands for their dense imagery intelligence outgrew the satellite and terrestrial network’s ability to deliver it.

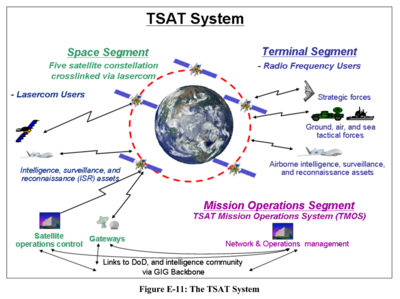

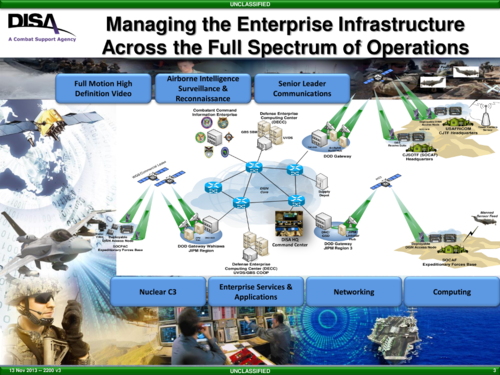

The US Under Secretary of Defense used this diagram in 2008 to illustrate how military and intelligence agencies used the GIG to communicate via satellite with drones and deployed forces.

High-bandwidth satellite constellations were part of the plan. The one shown (TSAT – Transformational Satellite) was later supplanted by Wideband Global Satcom (WGS).

Teleport

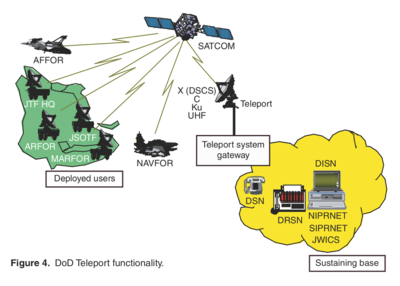

The DISN was connected to satellite constellations through antenna sites called Teleports, illustrated on the left by the Johns Hopkins University Applied Physics Laboratory.

The US subsequently built Teleports in eight locations: Bahrain; Wahiawa, Hawaii; Fort Buckner, Okinawa, Japan; Lago Patria, Italy; Landstuhl / Ramstein, Germany; Guam; Camp Roberts, California; and Northwest, Virginia.

Internet radio

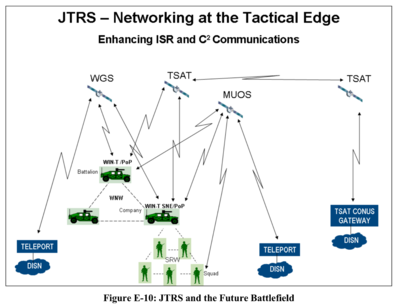

Deployed forces were also connected to the GIG through a software-defined radio system that communicated using the internet protocol.

The Joint Tactical Radio System (JTRS) was developed by the Massachusetts Institute of Technology Lincoln Laboratory (MIT-LL), a university department unusual in being funded wholly by the military, which in 2004 was responsible for developing major components of the GIG and the surveillance and weapons targeting technologies that would run over it.

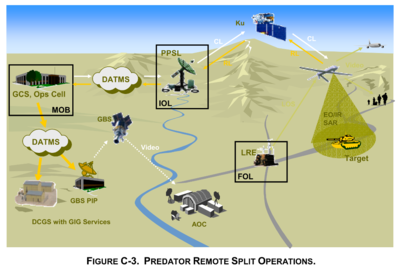

Predator

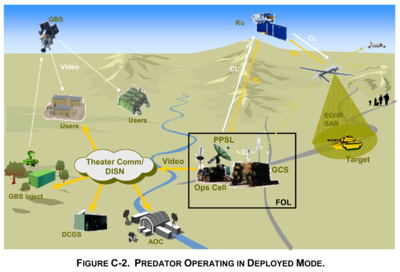

The Predator strike drone relied on the GIG/DISN to target its weapons, as illustrated in the US Department of Defense Unmanned Aircraft Systems Roadmap 2005-2030.

There were two ways of controlling a Predator: from a local station or one far away. Both relied on the GIG/DISN.

When a remote pilot housed with deployed forces in the theatre of operations (FOL – Forward Operating Location) did the controlling, the drone relied on the DISN to “reach back” to core military computer systems essential to its mission.

The most essential computer system was the Distributed Common Ground System (DCGS).

The DISN and DCGS were also essential in the other primary Predator control mode.

In a Remote Split Operation, the Predator would be launched from a “line-of-sight” control station near to its mission. But control would pass over to a remote pilot back in a fixed military base, such as Nellis Air Force Base in Nevada.

The drone’s control signals would be routed over the DISN to a Predator Primary Satellite Link (PPSL). Because it pre-dated the GIG, the Predator used proprietary network technology and outmoded asynchronous transfer mode (ATM) communications. This was handled by the DISN Asynchronous Transfer Mode System (DATMS).

But the old Predator comms systems were a hindrance to the GIG strategy. They were not internet-enabled. That meant they couldn’t be assimilated into the GIG.

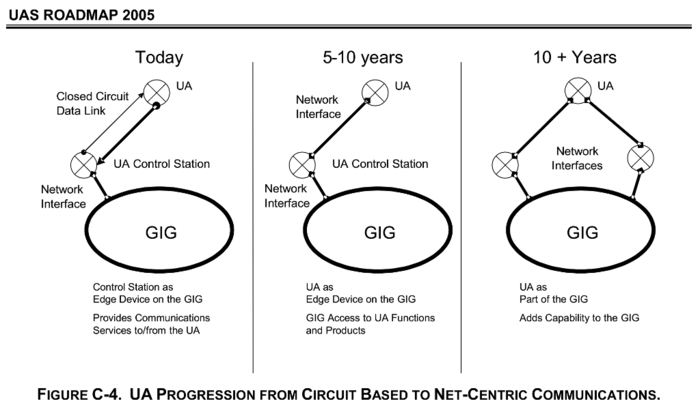

The aim of the GIG was for every sensor, every weapon, every comms system and every software program to operate using the internet protocol. Any military resource would then be available for control or observation, for attack or just for intelligence, to anyone with access to a GIG terminal anywhere in the world, in real-time.

The difference between drones communicating over DATMS and drones communicating over the internet-enabled GIG/DISN was like the difference between communicating via walkie-talkie and running apps on your smartphone.

DoD had a 10-year plan to get around the problem by gradually upgrading its drone comms infrastructure. The first step would connect drones to the GIG by turning their satellite links into GIG gateways. That was due to be done at around the time of writing. The drones would then act as if they were an integral part of the network.

The drones would ultimately become internet-enabled themselves. They would communicate as internet nodes. Their on-board internet routers would use any spare bandwidth to route other people’s GIG traffic. They would become part of the network.

By the time the Department of Defense Unmanned Systems Integrated Roadmap FY2013-2038 was published in December, US drones were operating in the way illustrated in stage 2 in the diagram above.

Drones were using network gateways to get their command instructions over the DISN, it said. The DISN likewise disseminated the surveillance data they picked up on their missions.

The power of the GIG plan would follow when every drone, soldier, satellite, ship, truck, gun and so on was sharing their intelligence and surveillance sensor data over it, as illustrated in the concept diagram above, from the Department of Defense’s 2007 GIG Architectural Vision.

They were all part of the GIG. They all communicated using the same internet protocol. They were all integral to the network.

A common communications protocol laid the foundation for common data formats and common software interfaces.

This was the transcendent aim of the GIG. It allowed military assets to be available on the network as building blocks. The GIG would be greater than the sum of its parts.

This would theoretically make every chunk of intelligence data, every surveillance camera, every weapon and every software system available as a building block on the GIG.

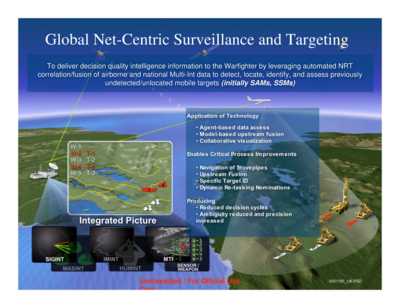

Net targeting

That was the basis of net-centric warfare – making everything available as a software service on the military internet.

Its most characteristic application was net-centric targeting.

That involved combining different surveillance sensors and intelligence databases on the fly, to get an automated fix on a target.

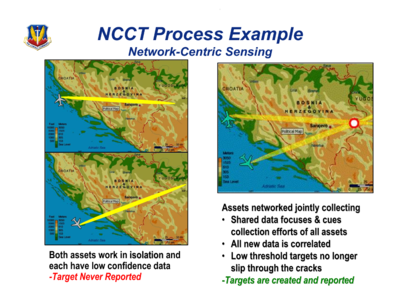

The power of net-centric targeting became apparent in simple tests that combined just two airborne surveillance sensors.

Each sensor had limited ability even to spot a target on its own, let alone get a fix, according to this graphic from a 2006 presentation by Colonel Tom Wozniak of US Air Combat Command.

The US Network-Centric Collaborative Targeting System (NCCT) takes sensor readings that individually have only a middling probability of identifying a target, and combines them to create high-probability fixes where they match.
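Why two weak sensors beat one can be shown with a little arithmetic. The sketch below is an illustration only: it assumes each sensor's chance of picking up the target is independent of the others and combines them with a textbook "noisy-OR" rule, P = 1 − (1 − p₁)(1 − p₂)…. NCCT's actual fusion method is not public, and the function name `fused_probability` is hypothetical.

```python
from math import prod


def fused_probability(probabilities):
    """Chance that at least one of several sensors picks up the target.

    Illustrative assumption: sensors detect independently (noisy-OR rule).
    This is not NCCT's actual, unpublished algorithm.
    """
    for p in probabilities:
        if not 0.0 <= p <= 1.0:
            raise ValueError("each probability must lie between 0 and 1")
    # Probability that every sensor misses; the complement is the
    # probability that at least one of them succeeds.
    all_miss = prod(1.0 - p for p in probabilities)
    return 1.0 - all_miss


# Two sensors that each have a middling 50% chance of spotting the target:
print(fused_probability([0.5, 0.5]))  # 0.75
```

Under this assumption, five such sensors would raise the combined chance to about 97%, which is the intuition behind pooling sensors over a network.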

The NCCT became operational in 2007 after years of development in collaboration with the UK, according to DoD statements to Congress.

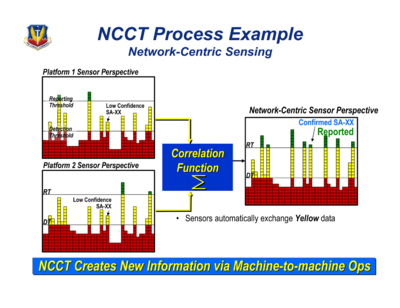

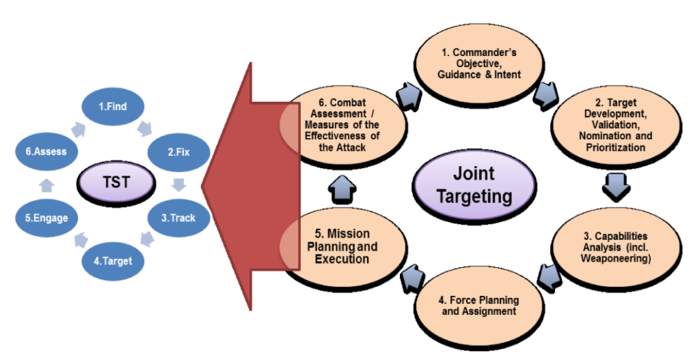

The pre-eminent application of net-centric targeting is the one that made the US targeted killing programme possible: time-critical, or time-sensitive, targeting.

The graphic above shows how it usually takes hours for military personnel to plan a strike.

They have to digest their battle plans for a start: pore over maps and work out what’s where. Then they have to find their target. That means arranging for intelligence, surveillance and reconnaissance (ISR) sensors to hunt for it. They have to collate all their intelligence and analyse the data.

Then they have to calculate a fix, nominate targets to be attacked, prioritize among them, co-ordinate their operations, find suitable weapons platforms and get them to the target area, account for weather, choose the best route to the target, watch out for friendlies and, when the strike has been made, assess the damage.

Network-centric targeting promised to do all this in minutes by automating it.

Intelligence databases

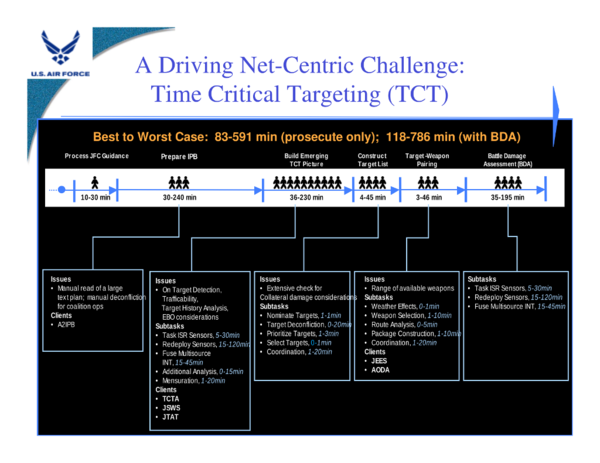

Net-centric targeting relies on a process called data fusion, or semantic interoperability.

That means storing your data in ways that can always be cross-matched.

Not just military data. Net-centric targeting developers wrote civil databases into their plans too, such as immigration databases and feeds from civil intelligence agencies.

Automated targeting

Combined with algorithms that watch for target signatures, this creates the means to spot targets on the fly.

And it creates the means to spot targets as small and as fleeting as people, and to kill them within minutes, as illustrated in the diagram above. It was published in 2007, the year the US Network-Centric Collaborative Targeting System (NCCT) went into operational use, by Computer Technology Associates (CTA), a defence and intelligence systems contractor that helped develop the system.

The example describes a target signature: an algorithm tells the targeting system that in the event of an emergency it should look out for a particular person, known to the Central Intelligence Agency (CIA) as “target ID 1454”.

The targeting system keeps watch for them with its Blue Force and Red Force Tracking systems. The military uses these to trace the movements of those they’ve classified as goodies and baddies.

The targeting system keeps track of immigration and airport databases as well. In the example, somebody on the Red target list (the general hit list) has popped up in the immigration database.

It checks to see if they match against CIA records. They do. And they match against the CIA file with the same target ID as specified in the target algorithm: “target ID 1454”. The targeting system sends geographical co-ordinates to people in green uniforms.

Time-sensitive targeting

This sort of computer vigilance, combined with networked intelligence, threw up new targeting possibilities.

The US started building common surveillance systems with its partners in the North Atlantic Treaty Organization (NATO). The more sources of intelligence they had, the more targets they could see.

NATO usually took days to plan a strike against even a fixed target. If it could do that in minutes, it could spot targets that had been too elusive before.

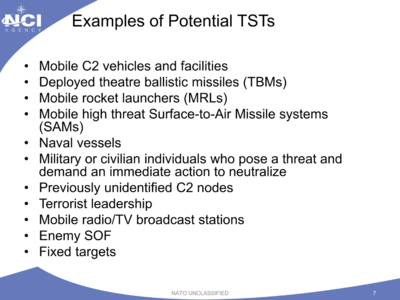

These Time-Sensitive Targets (TSTs) could be threats that emerged so quickly that they had to be attacked within minutes if they were to be stopped.

Or they could be “lucrative” targets that appeared in the surveillance net only fleetingly, and would escape if they weren’t attacked quickly.

“TST gives friendly forces the option of striking targets minutes after they are identified,” said this presentation by NATO’s chief scientist in 2013.

NATO targeting

The US formed a coalition to develop a web of NATO net-centric targeting systems.

It would get target intelligence on the fly from surveillance gathered by any number of NATO countries that happened to have forces, sensors or databases with something to add to the kill equation.

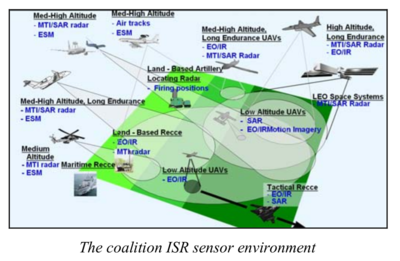

The Multi-Sensor Aerospace-Ground Joint ISR Interoperability Coalition (MAJIIC) worked on making NATO ISR sensors produce data in the same formats.

MAJIIC aimed to make innumerable surveillance platforms compatible: electro-optical (EO), infra-red (IR), synthetic aperture radar (SAR – high resolution video or still images), moving target indicators (MTI), and Electronic Support Measures (ESM – electronic emissions).

Their aim is what US strategists call “dominant battlespace awareness” – having more eyes and ears feeding more situational awareness back into the network than anybody else.

Afghanistan strike

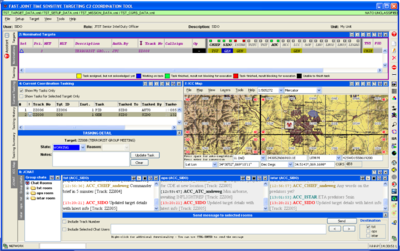

NATO’s chief scientist gave a recent example of net-centric targeting using its own TST tool last year.

The screen shot demonstrates a NATO strike against armed opponents of its military invasion in Kabul, Afghanistan.

It shows a map of Kabul with the location of the targeted people, as displayed in its TST tool, called Flexible, Advanced C2 Services for NATO (Joint) Time Sensitive Targeting (FAST).

This is the view that would appear on the computer screen of an intelligence officer, perhaps at a desk in Djibouti, Bahrain, Stuttgart, or Tampa, Florida.

The intelligence officer has named his target “Terrorist Group Meeting” and given a track identification code “ZZ008” to distinguish it from other targets in the system.

The software supports internet chat between military personnel overseeing the operation. This is the sort of communications handled by SIPRNET over the DISN. The FAST tool handles multiple target tracks at the same time.

An intelligence chief and another Senior Intelligence Duty Officer (SIDO) are also in the system, pursuing tracks on targets “ZZ004” and “ZZ005”.

“Any word on the Predators yet?” says a message from a chief of Intelligence, surveillance, target acquisition, and reconnaissance (Istar).

“ETA predators 5min,” he is told.

Just a minute before, Air Traffic Control sent a message saying an aircraft had been launched against another track ID, “ZZ006”.

Computerizing weapons targeting involved breaking it up into a series of steps.

It was systematized, as business functions like purchasing and manufacturing were when they were computerized, with human actions classified into distinct processes like ‘produce purchase order’, ‘send invoice’, ‘receive goods’.

The Time-Sensitive Targeting process is commonly known as the kill chain. This has six steps: find, fix, track, target, engage, and assess.

UK gizmo

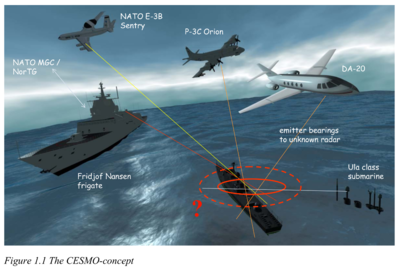

The UK developed a system that feeds NCCT with target data gleaned from conventional signals intelligence.

The system, called Cooperative Electronic Support Measures Operations (CESMO), produces target data that is merged with other intelligence in NCCT.

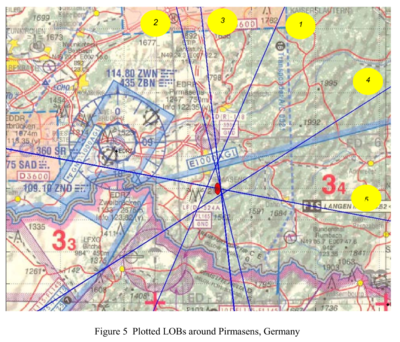

A NATO test of CESMO in 2005 produced this map, showing line-of-bearing (LOB) readings from ISR sensors.

Each LOB is a single signals intelligence reading from a surveillance aircraft.

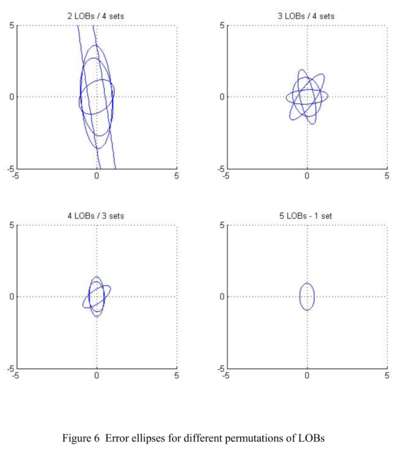

As expected, the test found a single reading was too unreliable to get a fix on an elusive target.

Even a single aircraft with two LOB fixes would have such a large “error ellipse” that it could not be used to target a weapon, said the NATO C3 Agency.

A target leaked electronic emissions for less than 30 seconds in NATO test TH05.

The area of a poor target fix – called the error ellipse – was 11km by 600m.

This was clearly far too large to risk an attack.
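To put that in perspective, here is the area of the uncertainty region, assuming (the NATO paper's exact definition is not given here) that 11km and 600m are the full lengths of the ellipse's long and short axes, so the semi-axes are a = 5.5km and b = 0.3km:

```latex
A = \pi a b = \pi \times 5.5\,\text{km} \times 0.3\,\text{km} \approx 5.2\,\text{km}^2
```

On that reading, the target could have been anywhere in more than five square kilometres.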

But with five sensors on the lookout, they got a fix.
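Why more sensors produce a fix can be seen in the basic geometry of direction finding: each line of bearing says only "the emitter is somewhere along this line", and several lines crossing pin it down. The sketch below is a generic textbook least-squares intersection of bearing lines, not CESMO's classified method. The function name `fix_from_bearings`, the flat x/y grid in kilometres and the angle convention (counter-clockwise from east, rather than a compass bearing) are all assumptions for illustration.

```python
import math


def fix_from_bearings(observations):
    """Least-squares intersection of lines of bearing (LOBs).

    observations: list of (x_km, y_km, angle_deg) tuples, one per sensor.
    Illustrative convention (an assumption): angle is measured
    counter-clockwise from the +x (east) axis, not as a compass bearing.
    Returns the (x, y) point minimising the sum of squared perpendicular
    distances to all the bearing lines.
    """
    a11 = a12 = a22 = 0.0
    b1 = b2 = 0.0
    for x, y, angle_deg in observations:
        theta = math.radians(angle_deg)
        # Unit vector perpendicular to the bearing direction (cos, sin).
        nx, ny = -math.sin(theta), math.cos(theta)
        # Every point p on this LOB satisfies n . p = n . sensor_position.
        c = nx * x + ny * y
        # Accumulate the 2x2 normal equations: (sum of n n^T) p = sum of n c.
        a11 += nx * nx
        a12 += nx * ny
        a22 += ny * ny
        b1 += nx * c
        b2 += ny * c
    det = a11 * a22 - a12 * a12
    if abs(det) < 1e-12:
        raise ValueError("bearings are (nearly) parallel: no unique fix")
    # Solve the 2x2 system by Cramer's rule.
    px = (a22 * b1 - a12 * b2) / det
    py = (a11 * b2 - a12 * b1) / det
    return px, py


# Two sensors 10km apart whose bearings cross at roughly (5, 5):
print(fix_from_bearings([(0, 0, 45), (10, 0, 135)]))
```

With more than two bearings, the least-squares solution averages out disagreements between noisy readings, which is the intuition behind the five-sensor fix. Real systems would also weight each reading by its measurement error, which this sketch leaves out.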

“Most of the data pertaining to CESMO is classified,” said a paper by the NATO C3 Agency in 2007.

But, it said: “It is possible to show how [CESMO] can geo-locate targets that cannot be found by stand-alone operating ELINT or ESM platforms.”

Target intelligence

The US military stores ISR data in its Distributed Common Ground System (DCGS).

This is commonly described as the imagery intelligence store queried by US defence and intelligence agencies alike when planning operations and forming target tracks and fixes.

Allied nations use it too. As do targeting systems. It gives them a common view of the battlefield and everything on it: common ground.

Common ground means the same surveillance from platforms such as drones, the same human intelligence, the same geo-location co-ordinates from target tracks, the same signals readings from CESMO, the same aerial photography and satellite images.

Drone operations

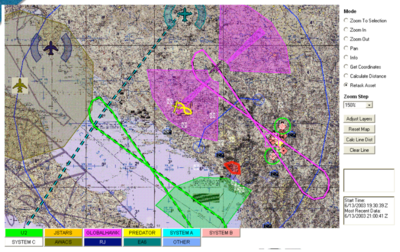

This screenshot purports to be taken from a DCGS tool in 2003 when the system was still in early development.

The image is of Croatia, Montenegro and Bosnia-Herzegovina on 13 June 2003, the date Croatian defence minister H.E. Zeljka Antunovic, speaking at the NATO Euro-Atlantic Partnership Council, welcomed the opening of NATO expansion talks among former Yugoslavian states and Baltic countries.

It shows the flight paths and surveillance nets of various aircraft including Global Hawk and Predator drones.

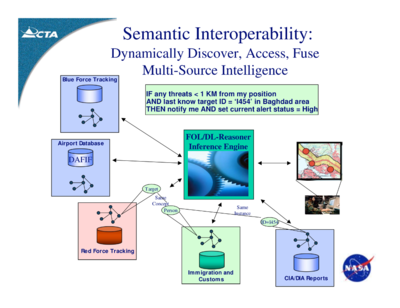

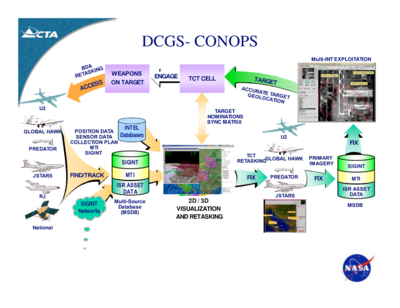

Time-Critical Targeting was part of the DCGS concept of operations (Conops), according to this 2007 presentation by net-centric developer Computer Technology Associates.

The image depicts ISR data stores being combined with civil and military intelligence databases to create time-sensitive target tracks and strikes.

UK drone intelligence

The UK has access to this DCGS data store as well.

Intelligence analysts at the Royal Air Force base in Marham, Norfolk, used DCGS imagery to direct UK operations in Afghanistan, said an RAF press release in 2011.

The RAF was building “real-time interoperability” with the DCGS, it said.

“Analysts receive feeds from the US Distributed Common Ground System (DCGS), which provides globally-networked Intelligence Surveillance and Reconnaissance (ISR) capabilities.

“This is the first time that the UK will have the capability to provide near real time imagery intelligence support to Afghanistan from the UK,” it said.

Murky area

National intelligence agencies use the DCGS as well, according to some descriptions of the system. That includes the CIA, which is reported to operate some of the controversial drone strikes.

The last 10 years have seen persistent references to the Intelligence Community as an influence and contributor to developments of the GIG, DCGS, net-centric systems and intelligence sharing. The likelihood of the Intelligence Community’s dependence on the DISN cannot be ignored.

Anup Ghosh, the former chief scientist of the Defense Advanced Research Projects Agency (DARPA), said in a 2005 speech that intelligence agencies were part of the DoD’s GIG vision. David Smith, a DISA consultant who worked on the DISN/GIG transformation, wrote in 2006 that it was driven by and would serve both the DoD and the Intelligence Community.

The US established a Unified Cross Domain Management Office (UCDMO) in 2006 to “address the needs of the DoD and the IC to share information and bridge disparate networks”, director Marianne Bailey said in a 2008 paper.

DoD told Congress in 2006 that tests of the GIG at the Naval Research Laboratory (NRL) would in 2007 include “end-to-end testing with DoD, Intelligence Community, Allied and Coalition activities”. The tests would incorporate JTRS, TSAT, Teleport, GIG Bandwidth Expansion, and Net-Centric Enterprise Services (NCES).

Rene Thaens of the NATO Communications and Information Agency said in a 2007 paper that signals intelligence sharing systems would get developed now that the Intelligence Community had discovered their benefits.

The US Under Secretary of Defense’s Joint Defense Science Board / Intelligence Science Board said in 2008 that investments by both the Intelligence Community and the DoD had created the GIG network infrastructure. It said excellent progress had already been made in “aligning meta-data from various sources across the Department of Defense and the Intelligence Community”.

DoD chief information officer (CIO) John Grimes formally committed in 2008 to ensure information and network situational data sharing with the Intelligence Community. The DoD and intelligence CIOs also formally agreed to recognize one another’s network security accreditations. DoD told Congress in 2009 that it was co-operating with the intelligence agencies on the development of its net-centric systems.

Mitre Corporation, which did software engineering on the DCGS, helped develop NATO ISR data standards and worked with MAJIIC, said in a 2009 paper about Net-Centric Enterprise systems that US intelligence agencies used them too.

DoD harmonised its IT standards and architectural processes with federal and intelligence agencies, and coalition allies in 2010, it told Congress in 2011. The alignment was done under the Command Information Superiority Architecture (CISA) programme, the Secretary of Defense office formed to develop the GIG architecture and net-centric reference model.

The US Navy told Congress in 2011 it was developing a system to fuse biometric data it took from people on ships it boarded with Intelligence Community counter-terrorism databases. DISA implemented an Intelligence Community system in 2011 that exposed DoD data to users with appropriate security clearance, it said in its GIG Convergence Master Plan 2012. It told Congress in 2012 the DISN carried information for “the DoD Intelligence Community and other federal agencies”.

USAF Major General Craig A. Franklin, vice director of Joint Staff, issued an order in 2012 specifying conditions for the Intelligence Community to connect to the GIG, and for IC systems to connect to “collateral DISN” systems. He charged the UCDMO with establishing “cross-domain” computer services between the DoD and Intelligence Community. The UCDMO simultaneously published a list of network services that would work across DoD and intelligence domains.

The National Geospatial Intelligence Agency (NGA) said in 2012 it had “aggressively” broken barriers to imagery intelligence data sharing between civil, defense, and intelligence agencies. The US Navy said in its 2013 Program Guide the next increment of its portion of the DCGS (DCGS-N) would “leverage” both DoD and Intelligence Community hardware and software infrastructures. It said upgrades on the Aries II aircraft, its premier manned ISR and targeting platform, would “enable continued alignment with the intelligence community”.

Teresa Takai, DoD chief information officer, ordered in 2013 that all DoD systems would be made interoperable with the Intelligence Community. She committed formally to agree meta-data standards with the Intelligence Community CIO. And she formally requested agencies and government departments including the CIA, Treasury, Department of Justice, NASA, and Department of Transportation agree cyber security procedures for connecting to the SIPRNET by June 2014. The NGA said in its 2013 update to the National Imagery Transmission Format Standard (NITF/S) that developments had been driven in recent years by a need to share intelligence data between the DoD and Intelligence Community. It was developed in collaboration with DoD, Intelligence Community, NATO, Allied Nations, technical bodies and the private sector.

“Intelligence Community” is an official designation of 17 agencies by the US Director of National Intelligence that includes the CIA, Federal Bureau of Investigation (FBI), Department of Homeland Security (DHS), Treasury, Drug Enforcement Administration (DEA), Departments of Energy and State, Coast Guard, NSA, and intelligence agencies associated with each of the US military forces.

The progress of their net-centric integration appears from public records to have been long, arduous, partial, reluctant, ongoing, yet undeniable.

Even if the CIA has been averse to conducting its drone operations directly over the GIG/DISN, it is likely that the DoD network has carried intelligence and other data essential to its missions in Yemen and elsewhere.

The CIA was directly associated with a more recent evolution of the DCGS.

Central Intelligence Agency

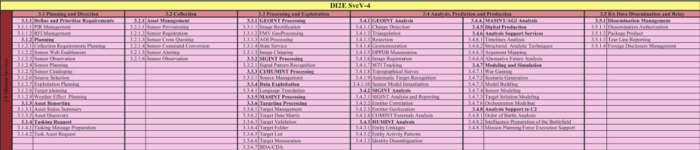

A more substantial computer framework for sharing data between defense and intelligence agencies and their international allies, called the Defense Intelligence Information Enterprise (DI2E), has subsumed DCGS.

At the heart of DI2E is the DCGS Integration Backbone (DIB), a set of data fusion services said in a 2012 Overview by the DCGS Multi-Execution Team Office at Hanscom Air Force Base, Massachusetts, to have delivered a system for the DoD and Intelligence Community to search, discover and retrieve its DCGS content. USAF characterised it as a cross-domain service.

DI2E delivered a plethora of cross-domain services for net-centric missions as part of the Department of Defense Architecture Framework in 2010, listed in the flesh-pink graphic above, which is taken from a sheet given to developers who attended a May 2013 DoD/IC “plugfest and mashup” at George Mason University, Virginia.

Sensor and target planning are included in the list of Mission Services on the sheet, a collection of over 150 net-centric software services called the DI2E SvcV-4 Services Functionality Description.

They also include SIGINT pattern matching, target validation, entity activity patterns and identity disambiguation for human intelligence (HUMINT), and intelligence preparation of the battlefield.

This is a defense intelligence initiative. That means it comes under the direct remit of the Defense Intelligence Agencies. But as always, it is described as for the benefit of both the DoD and the wider Intelligence Community.

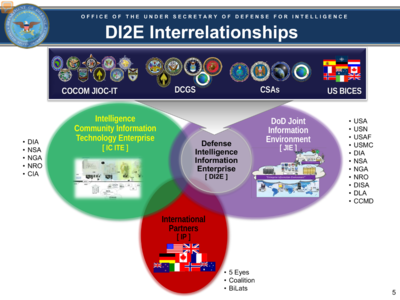

The Under Secretary of Defense for Intelligence published a diagram of the stakeholders in DI2E in a presentation last year.

DI2E was owned by defense intelligence. But the CIA and other intelligence agencies used it.

Meanwhile, in April, the US military was reported to have stopped sending drones over Yemen. The CIA was said to have continued, but from an erstwhile secret base in Saudi Arabia.

Yemen

Even when drone attacks on Yemen were reportedly launched from Djibouti, the picture was murky enough for UK officials to dismiss a complaint by legal charity Reprieve that the UK connection made BT, its contractor, answerable for resulting civilian deaths.

The overlap of military commands around Yemen made the picture complicated. It was hard to point at a drone strike and say who launched it, from where, and with comms directed down which pipe. The US wouldn’t say. BT had ignored the question.

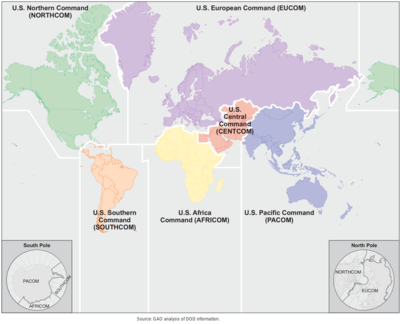

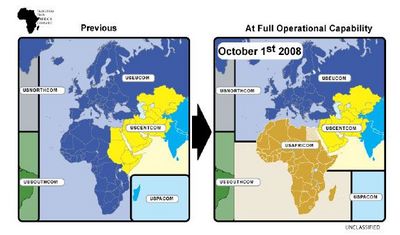

US Central Command (Centcom), the military group that invaded Iraq, ran Lemonnier until October 2008, when it handed control to US Africom.

Centcom kept Yemen as an operational area. But its base in Bahrain was almost 1,000 miles away.

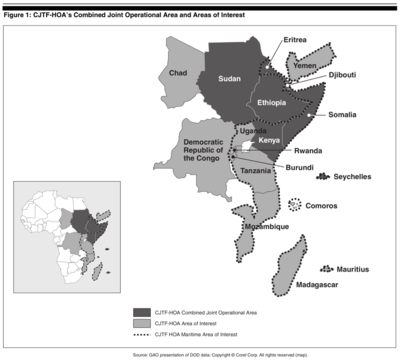

Africom kept Yemen as an “area of interest”. Lemonnier was separated from Yemen by a finger of water just 20 miles across, called the Bab-el-Mandeb strait. Reports continued to cite Lemonnier as a launch site for lethal drone targeting missions.

US Africom would not tell Computer Weekly what drone missions launched from Lemonnier. Not even whether they did. Nor what mission support it gave Centcom. Nor whether it did. Nor whether Centcom had continued operating from Lemonnier after command passed to Africom. Nor whether Africom carried out missions in Yemen under Centcom’s command.

US Africom spokesman Army Major Fred Harrell said a lot of assumptions were made about the drone strikes. But like the White House, he refused to clarify who, what, where, when.

But he did confirm that Centcom co-ordinated Yemen operations with Djibouti.

“Our area of responsibility borders that of Central Command and also US European Command,” said Harrell.

“So it’s safe to say that anything that occurs across what we call the seam between where our area of responsibility ends and where theirs starts, there’s always co-ordination between combatant commands on what goes on.

“We do co-ordinate with our neighbour combatant commands, such as European Command and Central Command,” he said.

This article has illustrated amply how such co-ordination is conducted over the DISN.

Earlier reports in Computer Weekly described how a 2012 DISN upgrade at Lemonnier coincided with the BT contract to extend the line from Croughton and a 2012 DISN upgrade in Stuttgart. And how an intelligence contractor was hiring analysts to work on targeting systems over the DISN from Stuttgart.

Drone video feeds

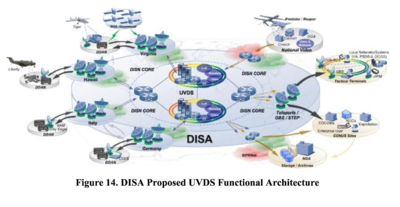

Upgrades including the UK connection have made the US network wide enough to support yet another development in drone targeting and intelligence: real-time video feeds.

DISA’s Unified Video Dissemination Service (UVDS) takes live video streams from Predator and Reaper drones and transmits them via Teleports such as those at the DISN comms hubs in Naples, Landstuhl and Bahrain.

UAV video is streamed via the Teleports and over the DISN, according to the graphic below, from the DoD 2013-2038 Unmanned Systems Roadmap.

The graphic illustrates how the drones’ imagery is then stored in the DCGS and in archives at the NSA. Taken from a DISA presentation last year, it illustrates how the whole system depends on the DISN.

It shows drones and surveillance aircraft associated with Camp Lemonnier, otherwise known as Headquarters of the Combined Joint Task Force-Horn of Africa (CJTF-HOA) under US Africom.

The drones feed their video streams via wideband satellite back to Lemonnier, as well as to a nearby DISN trunk gateway, a Teleport.

DoD records occasionally state that its net-centric and DISN investments aimed to give simultaneous views of the battlespace to any personnel or commanders anywhere in the world.

This was, for example, one reason given for the DISN investment at Lemonnier. The idea was that it might help commanders at bases in different places, such as Bahrain and Djibouti, commands with headquarters in places such as Stuttgart and Tampa, and perhaps even intelligence analysts in different domains, to co-ordinate their missions. Streaming drone video was part of that.

Drone over IP

The DISN core, built with trunk lines like the one between Djibouti and the UK, provided the basis of this strategy, as of all the other net-centric services.

It would allow staff in different locations to use the same systems, see the same intelligence and collaborate in the same operations.

![DISN in Current – 2012 – UVDS Operational Architecture](https://cdn.ttgtmedia.com/ITKE/cwblogs/public-sector/assets_c/2014/06/DISN%20in%20Current%20-%202012%20-%20UVDS%20Operational%20Architecture%20%3C%20DoD%20Unmanned%20Systems%20Roadmap%202013%20to%202038-thumb-600×420-140227.png)

The DISN Core fibre network is hence at the centre of this network diagram showing how the Unified Video Dissemination Service (UVDS) operated in 2012.

The diagram shows video feeds running from drones, over satellite links and finally via Teleports, over the DISN.

The Teleports at Lago Patria, Italy and Landstuhl, Germany are shown distributing live video feeds to the DISN.

The Italian Teleport, on the DISN between the UK and Djibouti, was made capable of live drone video comms in May 2012, the diagram notes. That was the year BT was contracted to make the UK connection.

Published in December 2013 in the DoD’s 2013-2038 Unmanned Systems Roadmap, the diagram shows how full-motion drone video is carried across the network to UVDS storage points, where it can be made available to users on SIPRNET.

The graphic has two distinct components: the part in green that gets the full motion video onto the network, and the part in red that makes the video available to military users and their systems. Both parts operate over the DISN.

In this diagram, the DISN links satcoms directed through Teleports with theatre communications for bases such as the one in Djibouti. It links those with the classified SIPRNET network, which also runs over the DISN, and with UVDS systems operating from DISA’s regional computing centres, called Defense Enterprise Computing Centers (DECCs).

Network infrastructure

The network components in the diagram above match both those listed in the official notice of BT’s 2012 contract for the UK connection and those in the US Navy’s Congressional budget justifications, which described the same DISN connection upgrade as an operational need for Djibouti.

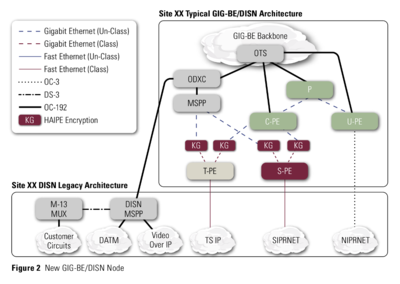

The key components are the MSPP (MultiService Provisioning Platform – a device to connect the DISN line at bases such as Camp Lemonnier) and HAIPE (High Assurance Internet Protocol Encryptor) encryption devices.

These were devices specified in US Congressional justifications for building the DISN into the GIG.

MSPP devices form the major junctions of the Global Information Grid (GIG), as illustrated in this graphic from a 2004 presentation by Frank Criste, then director of Communications Programs for the US Office of the Secretary of Defense.

The diagram shows the GIG test environment most likely operated by the Naval Research Laboratory (NRL) in 2004. It portrays components that would later be used to build the GIG in the real world.

The network infrastructure specified for BT’s UK connection was also illustrated in this diagram from an article in the summer 2006 DoD Information Assurance Newsletter.

It illustrates the GIG-Bandwidth Expansion (GIG-BE) programme, a scheme to upgrade the GIG for net-centric warfare and to carry the imagery intelligence spewed out by burgeoning numbers of drones.

The new DISN infrastructure would include OC-192 fibre-optic cables, ODXC and MSPP network devices, and “KG”-class high-speed High Assurance Internet Protocol Encryptor (HAIPE) devices, all devices specified in BT’s contract for the UK-Djibouti connection, and all also matching US budget justifications for expenditure.

“With the expanded bandwidth provided by GIG-BE, DISA can address high-capacity applications (e.g., imaging, video streaming) and provide a higher degree of network security and integrity,” said David Smith, the DISN programme manager who wrote the article.

“The GIG-BE program is the first of its kind to bring high-speed High Assurance Internet Protocol Encryptor (HAIPE) devices to a DoD network.

“The HAIPE devices, introduced because of the National Security Agency’s anticipatory development, will greatly increase the ability to bring secure, net-centric capabilities to the Intelligence Community and DoD operations,” he said.

BT has consistently said it could not be held responsible for what anybody did with the communications infrastructure it supplied.

“BT can categorically state that the communications system mentioned in Reprieve’s complaint is a general purpose fibre-optic system. It has not been specifically designed or adapted by BT for military purposes. BT has no knowledge of the reported US drone strikes and has no involvement in any such activity,” said a spokesman for BT in response to questions earlier this year.