Panther AI SOC platform ‘closes loop’ on security Ops

Panther Labs (hereafter just Panther) describes itself as a complete AI security operations centre (SOC) platform, characterised by its ability to scale the whole security operations lifecycle.

The technology works by virtue of AI agents embedded natively across Panther’s data lake, detection engine and “organisational knowledge” foundation, which investigate alerts, act autonomously and feed every decision back into the system.

The company says that, unlike “AI overlay tools” which are disconnected from the underlying data and detection logic, the closed-loop architecture here turns every investigation into compounding intelligence.

Beyond AI overlay tools

For the record, an AI security overlay is a software services layer designed to integrate with existing software codebases and wider systems in order to deliver real-time protection and monitoring using machine learning to pinpoint, identify and detect anomalies. This technology is also capable of redacting sensitive data that may exist in prompts as well as blocking prompt injection threats. Viewed by some as a shortcut, this technique enables software teams to up their security consideration without a complete infrastructure overhaul.

Panther’s approach is different; the company says it embeds AI agents natively across the security operations lifecycle in a move to democratise senior-level expertise, accelerate critical SOC workflows and consolidate siloed tools and scattered context.

The Panther AI SOC Platform is said to be a security operations platform built around a closed loop, i.e. AI agents don’t just investigate alerts, they continuously learn the patterns and risk profile of an organisation… and this means that they improve over time.

Evolution of the modern security stack

This is not all about adding more tools, more analysts and faster triage. That’s because, according to Panther founder and CEO Jack Naglieri, the modern security stack wasn’t designed.

It accumulated.

“Dozens of tools exist, each with its own narrow view of the environment, each missing the context that would make it truly effective. Panther’s agents have native access to the data lake, detection engine and a firm’s organisational knowledge, giving them the full context needed to investigate thoroughly, act autonomously and incorporate every outcome back into the platform,” noted Naglieri and team, in line with this announcement.

He suggests that as AI takes on more of the investigative work, the nature of security operations shifts. This means that detections evolve from signals written for human analysts into living logic that guides AI reasoning. The result is a SOC that gets measurably smarter over time: detection accuracy improves, alert volume declines and security expertise scales across the entire team.

“For years, the industry treated the SOC’s core challenge as a scale problem. But scale was never the real constraint. The SOC has always demanded human judgment – knowing which signals matter, knowing what context to pull and where to find it, making the right call on a borderline alert. That expertise just didn’t scale, until now. Today, analysts aren’t doing the work. They’re guiding it. Every decision they make gets encoded back into the platform, so the system learns how your team thinks and gets measurably smarter over time. That’s what closing the loop means,” said Naglieri.

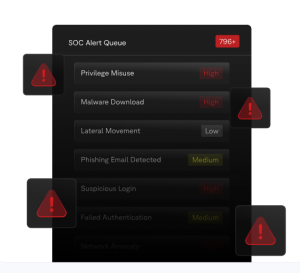

Key capabilities include the AI Alert Triage Agent, a service built to autonomously investigate alerts by drawing on all available context (the data lake, historical alerts, detections etc.) to deliver a clear risk classification with transparent reasoning. The agent learns the unique patterns and risk profile of each customer’s environment, auto-resolving noise and escalating only what matters.

Closed-loop detection tuning

Every triage outcome becomes a label that automatically tunes detection logic over time. Closed-loop detection tuning means alert volume doesn’t just get triaged faster, it shrinks. Investigation outcomes feed directly back into detection rules as reviewable code, so the system gets measurably smarter with every decision a team makes.
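
To make the idea concrete, here is a minimal Python sketch of how triage labels might feed a tuning step. It is not Panther’s implementation: the `TriageOutcome` class, the `propose_tuning` function, the label names, the rule IDs and the thresholds are all hypothetical, chosen only to illustrate outcomes being turned into review candidates.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class TriageOutcome:
    """One resolved alert: which rule fired and how triage labelled it."""
    rule_id: str
    label: str  # "benign" or "malicious" (hypothetical label set)


def propose_tuning(outcomes, min_alerts=5, benign_ratio=0.8):
    """Return rule IDs whose alerts were mostly labelled benign.

    The result is a list of candidates for human review, not a set of
    changes applied automatically. Thresholds are illustrative assumptions.
    """
    totals = Counter(o.rule_id for o in outcomes)
    benign = Counter(o.rule_id for o in outcomes if o.label == "benign")
    return sorted(
        rule_id
        for rule_id, total in totals.items()
        if total >= min_alerts and benign[rule_id] / total >= benign_ratio
    )


# Hypothetical history: one noisy rule and one rule that finds real threats.
outcomes = (
    [TriageOutcome("okta_new_device_login", "benign")] * 9
    + [TriageOutcome("okta_new_device_login", "malicious")]
    + [TriageOutcome("aws_root_console_login", "malicious")] * 5
)
candidates = propose_tuning(outcomes)
print(candidates)
```

In this toy history only the noisy rule is flagged, which mirrors the claim that alert volume shrinks because the rules themselves get tuned, with a person still reviewing each proposed change.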

Also, here we find an AI Detection Builder, which converts threat hypotheses described in natural language into production-ready Python detections, delivered as GitHub pull requests with human review required before deployment. The output is real code in a real CI/CD pipeline.

Moving onward to Panther’s Proactive Threat Coverage, this enables users to drive scheduled AI runs that analyse telemetry across the full data lake to surface threats beyond what pre-written rules cover, identifying gaps before they become incidents.

“Because Panther owns both the data lake and the detection engine, findings convert directly into production detections through the same closed-loop workflow. Conversational Investigation services offer natural language queries across all normalised log sources, with the ability to reference detection logic directly – not just raw events. Analysts investigate incidents, hunt threats and build detections without writing a query,” note Naglieri and team.

Context Assembly via Model Context Protocol (MCP) means every investigation automatically pulls context from identity providers, ticketing systems, code repositories and internal documentation – the environmental knowledge that lives outside any single security tool. MCP gives Panther’s AI agents the same cross-functional awareness that a senior analyst builds over years on the job.

Every action logged

Controlled Automation means high-confidence benign alerts close automatically with full audit trails. Detection improvements deploy through existing approval workflows. Every automated action is logged, reviewable and auditable, a design aimed at the trust requirements CISOs demand before granting AI operational authority.
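
As a rough illustration of that guardrail, the Python sketch below auto-closes an alert only when it is classified benign above a confidence threshold, and writes an audit record for every decision either way. The `handle_alert` function, the alert fields and the 0.95 threshold are assumptions made for this example rather than details from Panther.

```python
import json
from datetime import datetime, timezone


def handle_alert(alert, audit_log, threshold=0.95):
    """Auto-close only high-confidence benign alerts; escalate the rest.

    Every decision, automated or not, is appended to audit_log as JSON.
    """
    auto_close = (
        alert["classification"] == "benign"
        and alert["confidence"] >= threshold
    )
    action = "auto_closed" if auto_close else "escalated"
    audit_log.append(json.dumps({
        "alert_id": alert["id"],
        "action": action,
        "confidence": alert["confidence"],
        "reasoning": alert["reasoning"],
        "timestamp": datetime.now(timezone.utc).isoformat(),
    }))
    return action


# Hypothetical alerts as an AI triage step might hand them over.
audit_log = []
first = handle_alert(
    {"id": "a-1", "classification": "benign", "confidence": 0.99,
     "reasoning": "Known VPN egress range for this user"},
    audit_log,
)
second = handle_alert(
    {"id": "a-2", "classification": "benign", "confidence": 0.60,
     "reasoning": "Unfamiliar device, weak supporting context"},
    audit_log,
)
print(first, second, len(audit_log))
```

The point of the design is that the escalated alert leaves the same kind of trail as the closed one, so a reviewer can reconstruct why each call was made.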

Python Detection-as-Code means detections are written in the language AI models understand best. Python-based detection logic, a SQL-queryable security data lake and structured schemas give AI agents the ability to read, reason about and propose specific changes to detection rules.
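
For readers who have not seen detection-as-code, the sketch below shows the general shape such a rule can take: a plain Python function that receives one log event and returns True when an alert should fire. The `rule` and `title` function names, the dictionary-style event and the CloudTrail-like field names are assumptions made for this example, not code taken from Panther.

```python
def rule(event):
    """Return True when the event should raise an alert.

    Flags a console login by the root user without MFA. Field names are
    illustrative and modelled loosely on cloud audit logs.
    """
    if event.get("eventName") != "ConsoleLogin":
        return False
    is_root = event.get("userIdentity", {}).get("type") == "Root"
    mfa_used = event.get("additionalEventData", {}).get("MFAUsed") == "Yes"
    return is_root and not mfa_used


def title(event):
    """Build a human-readable alert title from the event."""
    account = event.get("recipientAccountId", "<unknown account>")
    return f"Root console login without MFA in account {account}"


# Hypothetical event, as it might look after normalisation.
sample = {
    "eventName": "ConsoleLogin",
    "userIdentity": {"type": "Root"},
    "additionalEventData": {"MFAUsed": "No"},
    "recipientAccountId": "123456789012",
}
fired = rule(sample)
alert_title = title(sample)
print(fired, alert_title)
```

Because the logic is ordinary code, a proposed change (say, excluding a known break-glass account) would show up as a small, readable diff in a pull request, which is the property the reviewable-code workflow relies on.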