CI/CD series: What drives continuum in software?

Software used to shut down.

Users would boot up applications and wrangle with their various functions until they had completed the tasks, computations or analyses they wanted; then they would turn off their machines and the applications would cease to operate.

Somewhere along the birth-line that drove the evolution of the modern web, that start-stop reality ceased to be.

Applications (and, in many cases, the ancillary database functions and other related systems that served them) became continuous, or always-on.

Where applications weren’t inherently always-on in the live sense, the connected ‘mothership’ responsible for them would keep updating continuously. Whenever the user connected a ‘live pipe’ to the back-end, the continuum would drive forward to deliver the updates, enhancements, security refreshes and other adornments that the app deserved.

This is what we now know as Continuous Integration and Continuous Delivery (CI/CD).

CI/CD reality

The reality of CI/CD today is that it has become an initialism in its own right, one that technologists rarely spell out in full when they speak, like API or GUI… or even OMG or LOL, if you must.

But as simple as CI/CD sounds in its basic form, there are questions to be answered.

We know that CI/CD is responsible for pushing out a set of ‘isolated changes’ to an existing application, but how big can those changes be… and at what point do we know that the isolated code is going to integrate properly with the deployed live production application?

A core advantage of CI/CD environments is the ability to garner immediate feedback from the user base and then (in theory at least) continuously develop an application’s functionality set ‘on-the-fly’, so CI/CD clearly has roots in Agile methodologies and the Extreme Programming (XP) paradigm.

But CI/CD isn’t just Agile iteration, so where exactly do the differences lie?

Do firms embark upon CI/CD because they think it’s a good idea, but end up falling short because they fail to create a well-managed continuous feedback system to understand what users think?

Does CI/CD automatically lead to fewer bugs? How frequent should releases be in a productive CI/CD environment, and how frequent is too frequent? Can you have CI/CD without DevOps? Does CI/CD make change more disruptive, or less?

TechTarget reminds us that GitLab supports CI/CD and can help run unit and integration tests on multiple machines, splitting builds across them to decrease project execution times… is this balancing act a key factor in effective CI/CD delivery?

Continuous delivery (the CD in CI/CD) contrasts with continuous deployment despite their proximity: continuous deployment is a similar approach in which software is also produced in short cycles, but released through automated deployments rather than manual ones… does this distinction need to be drawn more clearly?

How do functional tests differ from unit tests in CI/CD environments… and, while we’re asking, if development teams use CI/CD tools to automate parts of the application build and construct a document trail, then what factors impact the wealth and worth of that document trail?

CWDN CI/CD series

The Computer Weekly Developer Network team now sets out on a mission to uncover the depths, breadths, highs, lows and in-betweens of CI/CD practices and methodologies as they stand today.

In a series of posts following this one we will feature commentary from industry software engineers who have a coalface understanding of what CI/CD is and which factors are most likely to shape its development going forward.

We look at how organisations are shifting to continuous integration and continuous deployment to deliver new software-powered functionality to the business. What are the common tools being used? How do organisations get started? What are the pitfalls? How much of an application is enough to go live with and then continuously build upon?

These (and more) are the CI/CD questions we will be asking, and we hope that you, dear reader, will come back again and again for updates… continuously.

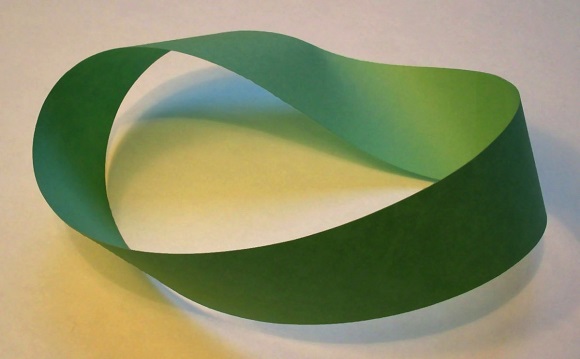

Image: Wikipedia