API series - Salt Security: Unified monitoring of APIs for seasoned security

This is a guest post for the Computer Weekly Developer Network written by Nick Rago in his capacity as field CTO at Salt Security – a company known for its specialist skills related to API security.

Rago describes himself as a technology enthusiast, entrepreneur, startup veteran, pro bono skier and full stack developer in the API management and security space.

To achieve unified API monitoring, organisations must first ensure that they have an accurate API inventory; this much we already know.

Many companies don’t even know how many APIs exist within their environment. According to the Salt Security Q3 State of API Security report, 86% of organisations lack confidence that their API inventory is complete.

Rago writes as follows…

This [API] awareness gap can be partially attributed to the increasingly rapid pace of API development driven by digital transformation. With developers under pressure to change APIs more frequently and push new APIs out ever faster, outdated or ‘zombie’ APIs and unknown or ‘shadow’ APIs pose huge concerns for organisations.

If an organisation misses even one API in its inventory, any remediation is only partial. Without insight into all of their APIs, organisations cannot accurately assess their exposure or reduce their risk.
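As a rough illustration of why a single missed API matters, the sketch below compares a documented inventory against endpoints observed in traffic. It is a minimal Python sketch, not Salt Security’s method: the `(method, path)` tuples, the `classify_endpoints` helper and the example endpoints are all hypothetical, and real discovery would also need to normalise paths and account for traffic that was never captured.

```python
# Hypothetical inventory check: compare documented endpoints with endpoints
# seen in runtime traffic. All names and data below are illustrative.

def classify_endpoints(documented, observed, deprecated=frozenset()):
    """Split endpoints into shadow, zombie and unused sets.

    documented: set of (method, path) pairs recorded in the API inventory
    observed:   set of (method, path) pairs seen in runtime traffic
    deprecated: documented endpoints that are supposed to be retired
    """
    shadow = observed - documented        # in use, but missing from the inventory
    zombie = observed & set(deprecated)   # retired on paper, still receiving calls
    unused = documented - observed        # documented, but not seen in this window
    return {"shadow": shadow, "zombie": zombie, "unused": unused}


documented = {("GET", "/v2/users/{id}"), ("POST", "/v2/orders"), ("GET", "/v1/users/{id}")}
deprecated = {("GET", "/v1/users/{id}")}
observed = {("GET", "/v2/users/{id}"), ("GET", "/v1/users/{id}"), ("DELETE", "/internal/cache")}

result = classify_endpoints(documented, observed, deprecated)
print(result["shadow"])  # {('DELETE', '/internal/cache')}
print(result["zombie"])  # {('GET', '/v1/users/{id}')}
```

An endpoint in the `unused` set is not necessarily safe to ignore; it may simply not have been called during the observation window.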

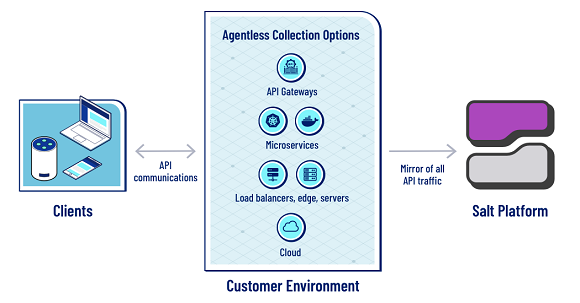

To offer adequate protection, security solutions must deliver full visibility into ALL APIs, front end and back end. Organisations also need an accurate inventory that is updated continuously and automatically. Visibility goes beyond knowing that an API exists: organisations need the full context of each API, such as its purpose, how and where it is being used, the type of data it handles, how it is structured, and its security posture.
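Knowing the type of data an API handles usually starts with inspecting its payloads. The following is a simplified Python sketch that tags a response body as containing email addresses or payment card numbers; the regular expressions, the `classify_payload` name and the two labels are assumptions for illustration, and production classifiers cover far more data types with fewer false positives.

```python
import re

# Hypothetical detectors; real data classification uses many more patterns.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
CARD_RE = re.compile(r"\b(?:\d[ -]?){13,19}\b")  # 13-19 digits, optional separators


def luhn_ok(digits: str) -> bool:
    """Luhn checksum, used to weed out random digit runs that are not card numbers."""
    total = 0
    for i, ch in enumerate(reversed(digits)):
        d = int(ch)
        if i % 2 == 1:  # double every second digit from the right
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0


def classify_payload(text: str) -> set:
    """Return the sensitive-data labels found in an API payload."""
    labels = set()
    if EMAIL_RE.search(text):
        labels.add("email")
    for match in CARD_RE.finditer(text):
        digits = re.sub(r"\D", "", match.group())
        if 13 <= len(digits) <= 19 and luhn_ok(digits):
            labels.add("payment_card")
            break
    return labels


print(classify_payload('{"user": "a@example.com", "card": "4111 1111 1111 1111"}'))
```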

Every API, endpoint and third-party API represents an extension of an organisation’s attack surface. To protect that surface, organisations must continuously monitor all of those APIs, public and private, during runtime to quickly distinguish normal from abnormal behaviour.
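To make ‘normal versus abnormal’ concrete, here is a deliberately simple statistical baseline in Python. It is not the AI-based approach argued for below, just an assumed stand-in: it flags a client whose hourly call count sits more than three standard deviations above its own history. The threshold and the `is_anomalous` name are hypothetical.

```python
import statistics


def is_anomalous(history, current, threshold=3.0):
    """Return True if `current` (e.g. calls per hour from one client) is more than
    `threshold` standard deviations above the mean of `history`.
    The default threshold of 3.0 is an assumption, not a recommendation."""
    if len(history) < 2:
        return False  # too little data to form a baseline
    mean = statistics.mean(history)
    stdev = statistics.stdev(history)
    if stdev == 0:
        return current > mean  # flat history: any increase is a deviation
    return (current - mean) / stdev > threshold


hourly_calls = [100, 110, 95, 105, 98, 102]
print(is_anomalous(hourly_calls, 104))  # False
print(is_anomalous(hourly_calls, 400))  # True
```

A low and slow attacker can stay inside a single-metric baseline like this one, which is part of why richer, context-aware models are needed.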

Only artificial intelligence (AI)-based solutions can manage this volume within today’s growing API ecosystem, making AI imperative for unified API monitoring. AI can identify behavioral changes across millions of API calls and correlate them over time to spot bad actors. AI can quickly and accurately analyse the large volumes of API traffic to deliver continuous and unified monitoring and the depth of context needed to protect APIs.

Core API management functions

We suggested to Rago that the core API management functions include authentication tools, rate-limiting controllers, analytics functions and ________. What would fill in the blank?

Dedicated API security fills in this blank. As the number and frequency of API-based attacks have risen, the need for dedicated API security has grown exponentially. Our research found that 94% of organisations have experienced an API security incident over the past 12 months. Organisations feel overwhelmed, and their traditional security solutions leave them ill-equipped to deal with exploding API attack activity.

Many API attacks today are carried out by authenticated entities that are authorised to use the API they are attacking, and they operate in a low and slow manner that evades rate-limit traffic controls. Existing security measures employed by API gateways, WAFs and identity providers clearly fail to adequately protect this growing attack surface. To stop attacks, organisations need a security strategy specifically designed for APIs. Key capabilities include continuous visibility into the attack surface; the ability to discover new and changed APIs; contextual understanding of API behaviours, so that API attacks and abuse can be accurately detected and stopped in runtime; and remediation of vulnerabilities in the build phase.
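The ‘low and slow’ pattern is easy to illustrate. In this hypothetical Python sketch, an authenticated client requests one order per minute, far below an assumed gateway limit of 60 requests per minute, so a per-minute rate limit never trips. A detector that instead counts how many distinct objects a client touches on an endpoint does notice. The limits, the endpoint and the `EnumerationDetector` class are assumptions, and window expiry is omitted for brevity.

```python
from collections import defaultdict

RATE_LIMIT_PER_MINUTE = 60   # hypothetical gateway rate limit (never reached below)
MAX_DISTINCT_OBJECTS = 50    # hypothetical norm for one user per observation window


class EnumerationDetector:
    """Counts distinct object IDs each client accesses per endpoint.
    Resetting the observation window is omitted to keep the sketch short."""

    def __init__(self, max_distinct=MAX_DISTINCT_OBJECTS):
        self.max_distinct = max_distinct
        self.seen = defaultdict(set)  # (client, endpoint) -> set of object IDs

    def record(self, client, endpoint, object_id):
        """Record one access; return True once the client exceeds the norm."""
        ids = self.seen[(client, endpoint)]
        ids.add(object_id)
        return len(ids) > self.max_distinct


detector = EnumerationDetector()
flagged_at = None
# Two hours at one request per minute, each for a different order ID:
# 1 request/minute stays far below RATE_LIMIT_PER_MINUTE.
for minute in range(120):
    over = detector.record("token-abc", "GET /orders/{id}", str(1000 + minute))
    if over and flagged_at is None:
        flagged_at = minute
print(flagged_at)  # 50
```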


Where does API testing sit?

API testing provides value, and companies need testing, scanning and fuzzing during the development phase.

A noticeable gap in today’s automated API testing pipelines, which typically run scans and tests that look for known bad behaviours, has been the ability to conduct negative functional testing and application logic vulnerability testing specific to each and every API endpoint. This step has previously fallen solely on developers or manual testers, who must learn the functional logic of an API endpoint before they can construct a test. As a result, many application logic vulnerabilities and negative behaviours go undiscovered before an API is promoted into production.
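A negative test asserts that an endpoint refuses something it should refuse. The Python sketch below uses a hypothetical in-memory `get_order` handler and sample data to show the idea for one business rule (a user must not read another user’s order). The handler, data and status codes are illustrative assumptions; the hard part described above is knowing which rules each endpoint should enforce.

```python
# Hypothetical in-memory data and handler standing in for a real API endpoint.
ORDERS = {
    "o-1": {"owner": "alice", "total": 42},
    "o-2": {"owner": "bob", "total": 7},
}


def get_order(requesting_user, order_id):
    """Return (status_code, body) for a hypothetical GET /orders/{order_id}."""
    order = ORDERS.get(order_id)
    if order is None:
        return 404, {}
    if order["owner"] != requesting_user:  # business rule: owners only
        return 403, {}
    return 200, order


def run_negative_tests(handler):
    """Run positive and negative cases; return a list of failed expectations."""
    cases = [
        ("alice", "o-1", 200),    # positive: owner reads own order
        ("alice", "o-2", 403),    # negative: authenticated user, someone else's order
        ("alice", "o-999", 404),  # negative: order that does not exist
    ]
    failures = []
    for user, order_id, expected in cases:
        status, _ = handler(user, order_id)
        if status != expected:
            failures.append(f"{user} -> {order_id}: expected {expected}, got {status}")
    return failures


print(run_negative_tests(get_order))  # []
```

Pointing the same cases at a handler that skips the ownership check would report the cross-user read as a failure, even though every request in it is authenticated and well formed.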

Organisations should look to employ API-focused security platforms that can use machine learning (ML) and AI to launch API attacks in test environments, giving better coverage of endpoint-specific business logic gaps as well as coding vulnerabilities.

However, while API security testing can help flush out more endpoint-specific, application logic-based vulnerabilities, it cannot be expected to find everything. Testing alone therefore isn’t enough: APIs must be observed as they are being used. Companies need to see each API exercised to fully understand what is going on and to identify any gaps in logic flow. API security must cover the entire API lifecycle and include runtime protection.

Because APIs are not just straight code and every API is unique, many API business logic vulnerabilities cannot be discovered in development. Most API attacks target business logic vulnerabilities, and APIs must be ‘exercised’, that is, observed in runtime, to spot logic issues.
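One way to picture ‘exercising’ an API: some logic flaws only show up in the order of calls within a session, not in any single endpoint’s code. This hypothetical Python sketch checks an assumed checkout flow and flags calls made before their prerequisite step, for example confirming an order without paying. The endpoint names and the single-prerequisite model are assumptions for illustration.

```python
# Hypothetical checkout flow: each call lists the step that must precede it
# within the same session (None means it can start a flow).
PREREQUISITE = {
    "POST /cart/items": None,
    "POST /checkout": "POST /cart/items",
    "POST /payments": "POST /checkout",
    "POST /orders/confirm": "POST /payments",
}


def out_of_sequence_calls(session_calls):
    """Return the calls made before their prerequisite appeared in the session."""
    seen = set()
    violations = []
    for call in session_calls:
        required = PREREQUISITE.get(call)
        if required is not None and required not in seen:
            violations.append(call)
        seen.add(call)
    return violations


normal = ["POST /cart/items", "POST /checkout", "POST /payments", "POST /orders/confirm"]
skipped_payment = ["POST /cart/items", "POST /checkout", "POST /orders/confirm"]
print(out_of_sequence_calls(normal))           # []
print(out_of_sequence_calls(skipped_payment))  # ['POST /orders/confirm']
```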

We also wanted to open the discussion to today’s API security risks in the modern IT stack, covering areas such as denial of service attacks and the greater attack surface naturally created by the very existence of APIs.

Extract or exfiltrate

Typically, attackers aim to extract or exfiltrate as much sensitive data as possible when targeting an application and its APIs. Account takeover (ATO) is another primary goal, giving attackers persistence and a way to abuse other users of the system.

However, attackers sometimes will seek to launch denial of service (DoS) attacks to render an application or service entirely unavailable.

The best-known DoS and distributed DoS (DDoS) attacks are volumetric, with an attacker simply overwhelming network paths and systems with more traffic than the architecture can handle. APIs do not always restrict the size or number of resources a client or user can request, which leaves them open to these attacks and can also degrade API server or gateway performance.
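Restricting how much a single request can ask for is one mitigation for the unbounded-request problem described above. A minimal Python sketch, assuming a `limit` query parameter and a hypothetical ceiling of 100 items per page, clamps the requested page size on the server rather than trusting the client:

```python
from typing import Optional

DEFAULT_PAGE_SIZE = 20   # hypothetical default
MAX_PAGE_SIZE = 100      # hypothetical server-side ceiling


def effective_page_size(raw_limit: Optional[str]) -> int:
    """Turn a client-supplied `limit` query parameter into a safe page size."""
    if raw_limit is None:
        return DEFAULT_PAGE_SIZE
    try:
        requested = int(raw_limit)
    except ValueError:
        return DEFAULT_PAGE_SIZE  # non-numeric input falls back to the default
    return max(1, min(requested, MAX_PAGE_SIZE))


print(effective_page_size("1000000"))  # 100
```

Rejecting oversized requests with an error instead of clamping them is an equally valid design: clamping keeps existing clients working, while rejecting makes the limit visible to callers.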

More nefarious, however, are the application-layer and endpoint-specific DoS attack patterns seen today. With the adoption of modern microservice-based architectures, many back-end functions are now microservices exposed as API endpoints, rather than ‘functions’ buried within a monolithic application’s code. As a result, many business functions require a client to make multiple API calls, or a sequence of calls to various endpoints, to complete.

This exposure gives attackers the opportunity to conduct application-layer or endpoint-specific DoS attacks aimed at disrupting critical business operations. Rather than trying to overwhelm a whole network, server or API gateway, the attacker looks to find and exploit security and application logic vulnerabilities at the endpoint level, crafting specific requests in a low and slow manner that can slow or break critical portions of the supply chain associated with a business function.
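Counting requests treats every call as equal, which is what an endpoint-specific attack exploits. As a hedged illustration, this Python sketch charges each call against a per-client budget using assumed relative endpoint costs, so a few calls to an expensive endpoint exhaust the budget long before a plain request counter would react. The costs, budget and `CostBudget` class are hypothetical, and a budget like this does not by itself stop a single crafted request that exploits a logic flaw.

```python
# Hypothetical relative costs: an export fans out to many back-end microservices.
ENDPOINT_COST = {"GET /products": 1, "POST /search": 5, "POST /reports/export": 50}
BUDGET_PER_WINDOW = 100  # hypothetical cost units per client per time window


class CostBudget:
    def __init__(self, budget=BUDGET_PER_WINDOW):
        self.budget = budget
        self.spent = {}  # client -> cost units used in the current window

    def allow(self, client, endpoint):
        """Charge the call's cost; refuse it if it would exceed the budget."""
        cost = ENDPOINT_COST.get(endpoint, 1)  # unknown endpoints default to cost 1
        used = self.spent.get(client, 0)
        if used + cost > self.budget:
            return False
        self.spent[client] = used + cost
        return True

    def reset_window(self):
        """Start a new time window (scheduling this is left to the caller)."""
        self.spent.clear()


limiter = CostBudget()
print([limiter.allow("client-1", "POST /reports/export") for _ in range(3)])  # [True, True, False]
```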

Attackers are aware that many organisations are unable to detect this type of API abuse and are not using proper context-based API runtime protection. As a result, the reconnaissance and attack activities behind these attacks typically go unnoticed, especially since the attack pattern may require only a small handful of requests, or in some cases just one, to disrupt a key business function and break the digital supply chain that an organisation, its customers and its partners rely on.

Panoply of communication protocols

The most common architectures for APIs are REST and SOAP. SOAP defines a standard protocol specification for XML-based message exchange, while REST is an architectural style rather than a protocol and is not tied to XML… so what should API users, developers and architects consider in this space?

APIs today come in many different varieties, such as RESTful JSON, RESTful XML, SOAP, GraphQL, JSON-RPC, XML-RPC and gRPC. To further complicate things, many organisations are using multiple API types at once, both in the applications they continue to support and in those they are now building. One of the most important things an organisation can do, one that benefits everyone (users, developers, architects, security, compliance and operations), is to ensure all APIs are properly and accurately documented and accounted for. Organisations should adopt a spec-first development mindset for APIs (that is, using Swagger/OAS) and work to ensure all APIs are represented and documented in some form of developer portal or service catalogue.
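When several API styles coexist, even building the inventory requires telling them apart. The Python heuristic below guesses an API style from a request’s Content-Type, path and body. It is an assumed, simplified sketch: the `guess_api_style` name is hypothetical, and real traffic has exceptions (GraphQL served on other paths, JSON-RPC 1.0 messages without a `jsonrpc` member, REST bodies that happen to contain a `query` field).

```python
import json


def guess_api_style(content_type: str, path: str, body: bytes) -> str:
    """Heuristically label a request's API style; all rules are assumptions."""
    media_type = content_type.split(";")[0].strip().lower()
    if media_type.startswith("application/grpc"):
        return "gRPC"
    if media_type == "application/soap+xml":  # SOAP 1.2 media type
        return "SOAP"
    if media_type in ("text/xml", "application/xml"):
        if b"<methodCall" in body:
            return "XML-RPC"
        if b"Envelope" in body:  # SOAP 1.1 commonly uses text/xml
            return "SOAP"
        return "RESTful XML"
    if media_type == "application/json":
        try:
            payload = json.loads(body or b"null")
        except ValueError:
            return "unknown"
        if isinstance(payload, dict) and payload.get("jsonrpc") == "2.0":
            return "JSON-RPC"
        if path.rstrip("/").endswith("/graphql") or (
            isinstance(payload, dict) and isinstance(payload.get("query"), str)
        ):
            return "GraphQL"
        return "RESTful JSON"
    return "unknown"


print(guess_api_style("application/json", "/graphql", b'{"query": "{ me { id } }"}'))  # GraphQL
```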

Not having an accurate source of truth for all APIs in play is something many organisations are struggling with today. A proper API inventory not only helps developers reuse existing services and stops shadow API development, but also provides critical insights for others in the organisation: What APIs do I have out there today? What is each one’s purpose and function? Where is it being used (internally, externally, etc.)? What types of data does it touch from a data classification standpoint? Does it adhere to security best practices?
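The inventory questions above map naturally onto fields of an inventory record. A minimal Python sketch, with hypothetical field names and labels, shows how such a record lets a security team ask a pointed question, such as which external APIs touch sensitive data without authentication or documentation:

```python
from dataclasses import dataclass, field

SENSITIVE = {"email", "payment_card"}  # hypothetical data classification labels


@dataclass
class ApiRecord:
    name: str
    purpose: str
    exposure: str                                   # "internal", "external" or "partner"
    data_classes: set = field(default_factory=set)  # labels for the data it touches
    requires_auth: bool = True
    documented: bool = True


def needs_review(records):
    """External APIs touching sensitive data that lack auth or documentation."""
    return [
        r.name
        for r in records
        if r.exposure == "external"
        and r.data_classes & SENSITIVE
        and (not r.requires_auth or not r.documented)
    ]


inventory = [
    ApiRecord("orders-v2", "Customer order history", "external", {"email"}),
    ApiRecord("legacy-export", "Nightly CSV export", "external", {"payment_card"}, documented=False),
    ApiRecord("health", "Liveness probe", "external"),
]
print(needs_review(inventory))  # ['legacy-export']
```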

For organisations that have many APIs and underlying endpoints exposed today without a good handle on documentation or inventory, hope is not lost. API discovery technology exists today to help those organisations discover, inventory and document the APIs in use across their organisation.
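A core step in traffic-based discovery is collapsing raw request paths into endpoint templates. The Python sketch below assumes that purely numeric segments and UUIDs are identifiers; that rule, and the `templatize` and `discover` names, are simplifying assumptions rather than how any particular discovery product works.

```python
import re
from collections import Counter

UUID_RE = re.compile(
    r"^[0-9a-fA-F]{8}-[0-9a-fA-F]{4}-[0-9a-fA-F]{4}-[0-9a-fA-F]{4}-[0-9a-fA-F]{12}$"
)


def templatize(path):
    """Replace path segments that look like identifiers with {id} (assumed rule)."""
    parts = []
    for segment in path.strip("/").split("/"):
        if segment.isdigit() or UUID_RE.match(segment):
            parts.append("{id}")
        else:
            parts.append(segment)
    return "/" + "/".join(parts)


def discover(requests):
    """Group raw (method, path) pairs into endpoint templates with hit counts."""
    return Counter((method, templatize(path.split("?")[0])) for method, path in requests)


endpoints = discover([
    ("GET", "/users/42"),
    ("GET", "/users/7"),
    ("GET", "/users/3f2504e0-4f89-11d3-9a0c-0305e82c3301/orders"),
    ("POST", "/orders?source=mobile"),
])
print(endpoints[("GET", "/users/{id}")])  # 2
```

Each resulting template can then be checked against the documented inventory to find endpoints nobody has written down.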