IT modernisation series – Coralogix: A thoroughly modern two-pronged approach to log analytics

Unlike digital-first organisations, traditional businesses have a wealth of enterprise applications built up over decades, many of which continue to run core business processes.

In this series of articles we investigate how organisations are approaching the modernisation, replatforming and migration of legacy applications and related data services.

We look at the tools and technologies available, encompassing aspects of change management and the use of APIs and containerisation (and more) to make legacy functionality and data available to cloud-native applications.

This post is written by Ariel Assaraf, CEO of log analytics company Coralogix.

Assaraf writes as follows…

With the cloud enabling small and medium companies to easily scale their operations and the DevOps movement taking over with its CI/CD approach, a proper and formalised approach to application [log file] logging is becoming a critical component of any company’s success. But with all the tools that engineers have to operate, how can they master logging tools, leverage their benefits and build solid processes?

This struggle is one of the key catalysts for companies to fall in love with open source-based monitoring.

Battle of the titans

When looking at the engineering community, there are two clear schools of thought. One wants high-end, proprietary software with out-of-the-box capabilities and support. The other is more DIY-oriented and wants to use open source to its benefit.

In the world of logging and monitoring, this battle is reflected by the two dominant players in the market – Splunk and Elastic.

Larger corporations and more traditional companies prefer Splunk due to its vast experience deploying on-premise for enterprise clients and its out-of-the-box installation and capabilities. Conversely, tech companies and startups seem to prefer the ELK stack with its flexibility and flourishing community.

The advantages of open source for fast-growing companies are clear: easier scaling, lower price, no vendor lock-in and, most importantly, easier onboarding for new employees. Almost any engineer you ask is either already familiar with, or can easily learn, the Elastic syntax and how to operate Kibana.

With those advantages, though, comes the challenge of maintaining and securing your open source logging stack, which can be painful for companies that lack resources or face a constant manpower shortage.

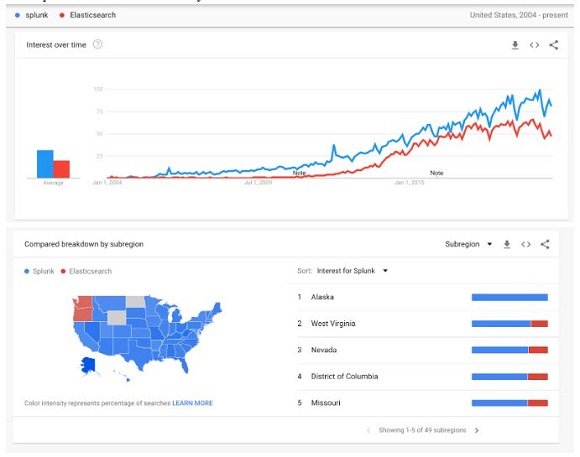

The Google Trends report above shows the search trends for Splunk and Elasticsearch and their geography. It’s interesting to note that Splunk is more popular in almost every single state in the U.S., but California and Washington, both known as startup hubs, are dominated by Elasticsearch.

This need for open source tools, combined with the struggle to maintain and secure them, has led to a new approach that we now see everywhere: commercial companies offering open source solutions as a service, with the goal of removing the burden of maintenance and security, adding required capabilities, and providing product and technical support.

The hybrid approach

Speaking about Coralogix, if I may be so bold in conclusion, we’ve adopted a two-pronged approach.

One is a proprietary product including anomaly detection, version benchmarks, a logging-oriented search interface and ML-powered user-defined alerts. The second is a fully-hosted, scaled and secured enterprise-grade ELK stack with Grafana so that engineers can collect, parse, search and visualize their data the way they are used to, or even use APIs to pull data and build their own analytics.
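
To make the idea of pulling data through an API concrete, here is a minimal sketch of what "building your own analytics" on an ELK-style stack might look like. It uses the standard Elasticsearch Query DSL (a `bool` filter plus a `terms` aggregation) to count recent error logs per application. The field names (`level`, `application.keyword`, `@timestamp`) and the helper names are assumptions made purely for illustration; they are not taken from any vendor's documented schema or API.

```python
def build_error_count_query(minutes=15, level="error"):
    """Build an Elasticsearch Query DSL body that counts recent logs per application.

    Field names here are hypothetical; adjust them to your own log mapping.
    """
    return {
        "size": 0,  # only the aggregation is needed, not the raw log lines
        "query": {
            "bool": {
                "filter": [
                    {"term": {"level": level}},
                    {"range": {"@timestamp": {"gte": f"now-{minutes}m"}}},
                ]
            }
        },
        "aggs": {
            "per_app": {"terms": {"field": "application.keyword", "size": 10}}
        },
    }


def summarise_buckets(response):
    """Turn the 'per_app' terms aggregation of a search response into a dict."""
    buckets = response["aggregations"]["per_app"]["buckets"]
    return {bucket["key"]: bucket["doc_count"] for bucket in buckets}
```

In practice the body would be sent as JSON to the `_search` endpoint of whichever Elasticsearch-compatible host and index you have access to, and the parsed response passed to `summarise_buckets`. The host, index name and authentication scheme depend on the provider and are deliberately left out here.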