What’s new in SQL Server 2014

What is the advantage of the new in-memory database engine, when SQL Server, in tandem with the operating system, will cache data in memory anyway? The answer is that the traditional disk-based model of data access is inefficient, even when the disk is cached in random access memory (RAM), since it uses a model where data is constantly copied from storage for processing and copied back for durability.

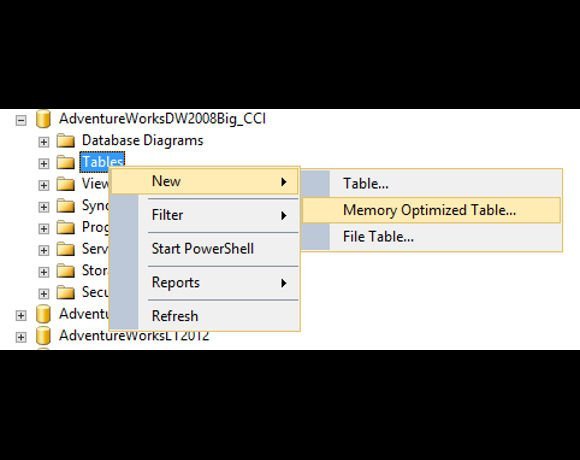

The new engine, by contrast, has direct access to the data and uses optimistic concurrency control to minimise locking. Data is streamed to disk in the background, and the engine still fully conforms to ACID (atomicity, consistency, isolation, durability) for reliable transactions. There is an option to give up durability for an even bigger speed boost. Indexes are held only in memory and rebuilt when the database loads.

A further twist is that stored procedures are compiled to native code, which has particular performance benefit given that data is accessed directly in memory. Memory-optimised tables can co-exist with disk-based tables in the same database, making it easy to integrate them, and users can query across both. The speed gain delivered by in-memory OLTP is remarkable. Microsoft claims up to 30 times improvement, and although Computer Weekly only saw around half that in tests, it is still most worthwhile.