IT leaders grapple with workforce skills gap as they deploy AI and digital technology

Organisations will have to find new ways of reskilling their workforce as they get to grips with artificial intelligence and other digital technologies. CIOs also need to keep their skills updated if they are to succeed

CIOs and leaders in digital technology are predicting significant growth in their use of artificial intelligence (AI), automation and the internet of things (IoT) in their businesses over the next two years.

But they face challenges scaling the technology across their organisations as they grapple with an existing workforce, and with university and school leavers, who lack the digital skills businesses need, research by Deloitte has revealed.

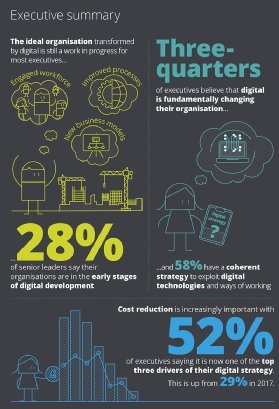

The number of organisations that say they have reached a state of maturity with digital technology is growing, but it’s a journey, with 28% still in the early stages of development, according to the survey of more than 150 digital leaders in the UK’s largest companies and public sector organisations.

The results showed that more CIOs and chief digital officers felt confident in their capabilities, compared with this time last year, but two in five leaders at organisations that are in the early stages of deploying digital technology said they did not yet feel ready to develop a digital strategy for their organisations.

Becoming a confident digital leader

The best digital leaders, the research showed, take time out from their day-to-day work to learn about the latest technologies.

They read about digital technology, listen to podcasts on their commute to work and buy the latest consumer gadgets for the family to try them out, said Deloitte partner Oliver Vernon-Harcourt.

“Confident leaders show a propensity to self-learn naturally, seeking out the content and training available within their own organisation, reading non-traditional media and being a bit more explorative in their thirst for knowledge,” he told Computer Weekly.

It is easy for busy executives to lose sight of the problems they are trying to solve when their time is taken up developing operating models, job descriptions and plans.

Vernon-Harcourt advised CIOs to “fall in love with the problem” rather than with the solution, claiming it was surprising how quickly people could make an impact. “By pushing yourself to imagine the ideal experience, you will be surprised how close you can get to it right now,” he said.

Thinking machines

The survey found technology leaders rate AI as the most important digital technology for their organisations after cloud, cyber security and data analytics.

Over 40% of executives have already invested in AI, and a similar number expect to invest in the technology over the next two years.

Companies are typically experimenting with AI by buying customer relationship management (CRM) software and enterprise resource planning (ERP) systems with built-in AI capabilities, allowing them to try the technology out at a low initial cost with little risk.

They plan to use AI to improve the services they offer to customers through “mass personalisation”, and to improve the efficiency of their internal business processes and operations.

AI is still experimental, with 21% of organisations identifying use cases and 43% running production pilots, but very few choosing to scale AI across multiple functions and business areas.

Despite its importance, almost half of digital leaders did not believe their leadership team had a clear understanding of AI or its impact on the enterprise.

Deloitte advises people responsible for developing digital technology strategies to actively learn about the different types of AI available and how they could be used to benefit their organisations.

Although it is still early days, digital leaders need to think about the ethical implications of artificial intelligence technology. Organisations use large datasets to train their AI, and if those datasets have a built-in bias, it is likely the AI will have the same bias.

Most organisations have policies in place to address ethics, and they can be adapted naturally to cover artificial intelligence.

“Conversations must involve not just the teams working with the technology, but also representatives from across the workforce and the customer base that will be affected,” said Vernon-Harcourt.

Why organisations need to reskill their workforce

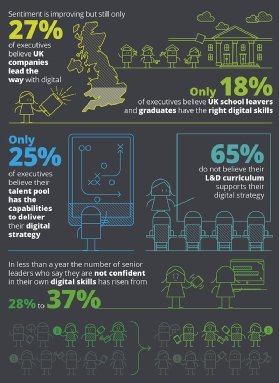

Many organisations say there is a shortage of school leavers and graduates entering the workforce with the digital skills and experience they need.

“The challenge is not just for business, but also for education facilities, as it’s very difficult to continually update a curriculum,” said Vernon-Harcourt.

With the half-life of a typical business competency at around five years, and the half-life of a technical skill at two-and-a-half years or less, organisations will need to develop ways of helping their workforce keep their skills up-to-date.
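To put those half-life figures in perspective, the short Python sketch below estimates how much of a skill's relevance might remain after a given number of years. It assumes simple exponential decay, which is one common reading of "half-life" and not a model taken from the Deloitte research; the function name `remaining_fraction` and the constants are illustrative only.

```python
# Illustrative sketch only: assumes skill relevance decays exponentially,
# which is an assumption made here, not a claim from the research.

def remaining_fraction(years: float, half_life: float) -> float:
    """Return the fraction of a skill's relevance left after `years`."""
    return 0.5 ** (years / half_life)


BUSINESS_HALF_LIFE = 5.0   # years, the figure quoted for a business competency
TECHNICAL_HALF_LIFE = 2.5  # years, the upper figure quoted for a technical skill

for years in (2.5, 5, 10):
    business = remaining_fraction(years, BUSINESS_HALF_LIFE)
    technical = remaining_fraction(years, TECHNICAL_HALF_LIFE)
    print(f"After {years} years: business {business:.0%}, technical {technical:.0%}")
```

Under this assumption, a technical skill left untouched for five years would retain about a quarter of its original relevance, against about half for a business competency.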

Vernon-Harcourt argued that leaders should encourage the workforce to approach learning throughout their career and communicate the importance of life-long learning.

The key is to make sure learning and development is embedded into the company culture and make training materials easily available, said Vernon-Harcourt.

“Leaders need to act as role models to create a culture with learning at its heart. It’s not just a case of telling your team that it’s important to learn more about new technology, but also to share what you have been learning yourself to really build that culture,” he added.

Just one in four digital leaders said their current workforce had the capability to execute their digital strategy, and 65% said their company’s learning and development programme did not support their digital strategy.

Deloitte advises companies to find the skills they need in other ways. That could mean building networks of “gig workers” who can be called in when needed, or putting work out to crowdsourcing or third-party organisations, giving them the ability to respond rapidly to changes.

Organisations should consider working with innovative startups, said Vernon-Harcourt. But he emphasised the importance of selecting companies that can assist with your firm’s strategy. In some cases, business collaborations with startups have turned into a PR exercise, he said.

Why you need to think about ethics if you are deploying AI

If something is unethical in the analogue world, it will be unethical in the artificial intelligence (AI) world, writes Matthew Howard, director of artificial intelligence at Deloitte.

Ensuring that ethical standards are adhered to is essential. Rather than inhibiting AI adoption, ethical and regulatory frameworks should guide us on how to develop and manage solutions that are actually sustainable and scalable in a way that meets stakeholder expectations.

While for many “low-risk” machine learning applications, such as online consumer music or film recommendations, an unsupervised continuous learning model may be the best commercial approach, robust control mechanisms and regulatory frameworks are vital for “high-risk” applications, such as clinical triage, judicial decisions or autonomous vehicles.